METHOD AND APPARATUS FOR VISION-LANGUAGE UNDERSTANDING

US20240144651

2024-05-02

Physics

G06V10/764

Drawings (4 of 31)

Smart overview of the Invention

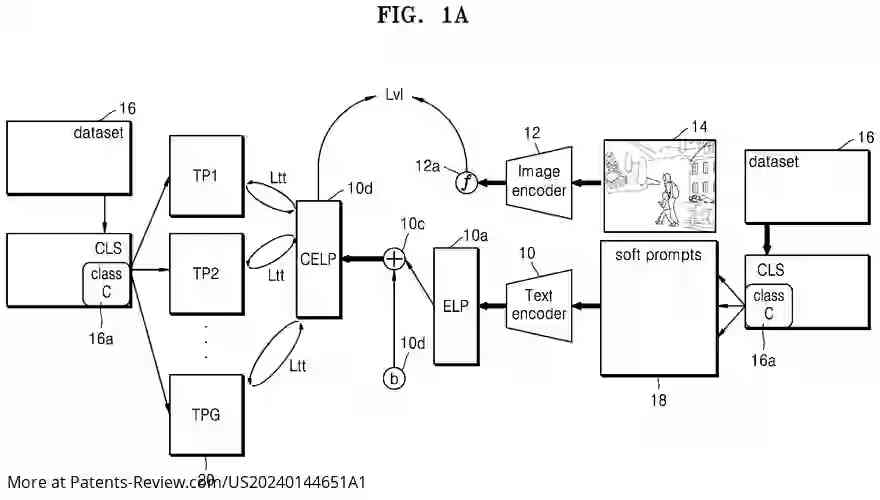

A computer-implemented method is described for training vision-language machine learning (ML) models to classify images of both known and novel classes. The method obtains a training dataset containing multiple class names and trains the model using augmented textual prompts. A frozen text encoder processes these prompts into text embeddings, and a cross-entropy loss is minimized so that the embeddings of learnable soft prompts align with them. The aim is to improve the model's ability to recognize classes it was not exposed to during training.

Field and Background

The application concerns vision-language understanding, in particular the training of models that can classify images into novel categories. Large-scale pre-training of ML models has produced foundation models that perform well at zero-shot understanding when driven by text prompts. The shift from hand-crafted prompts to learned prompts has exposed a need for training methods that keep learned prompts effective on new tasks.

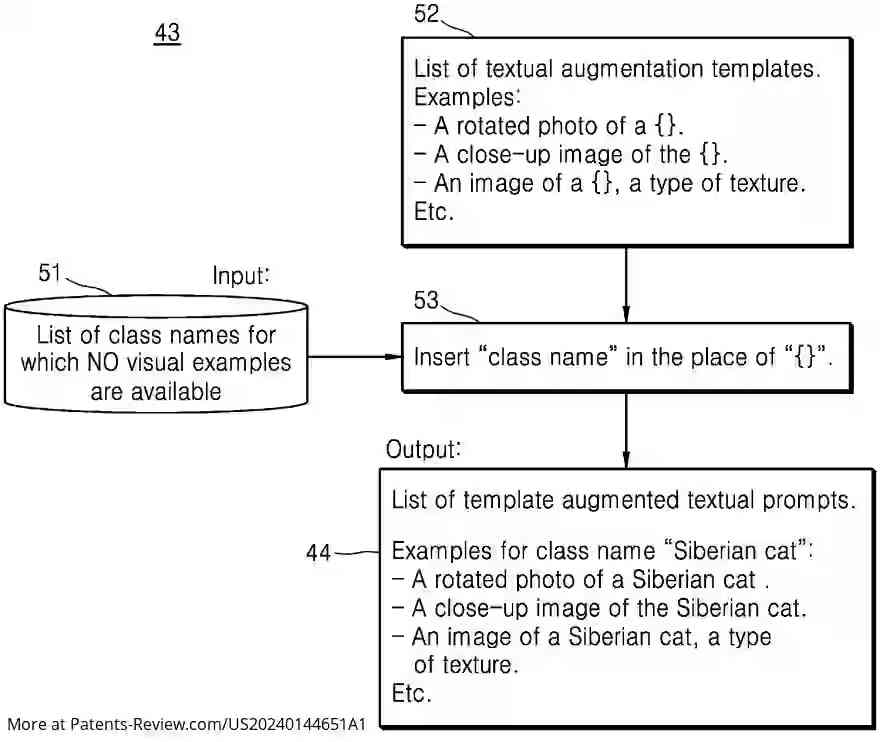

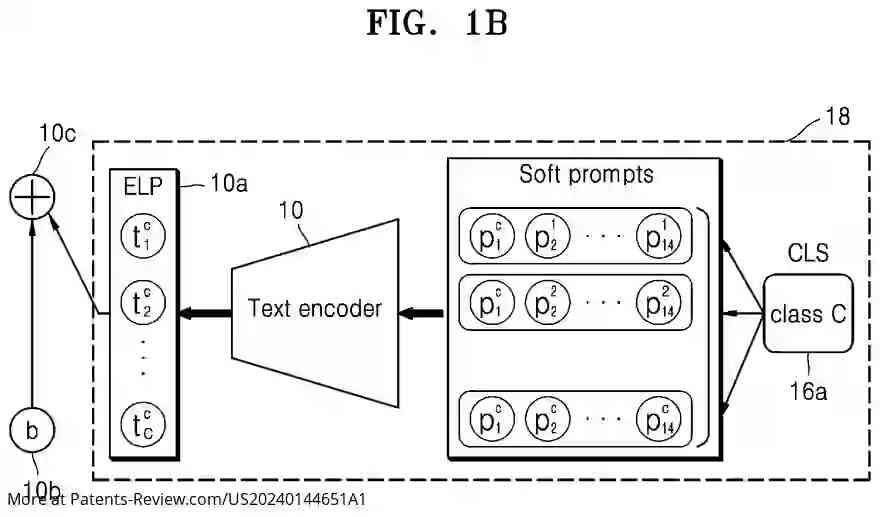

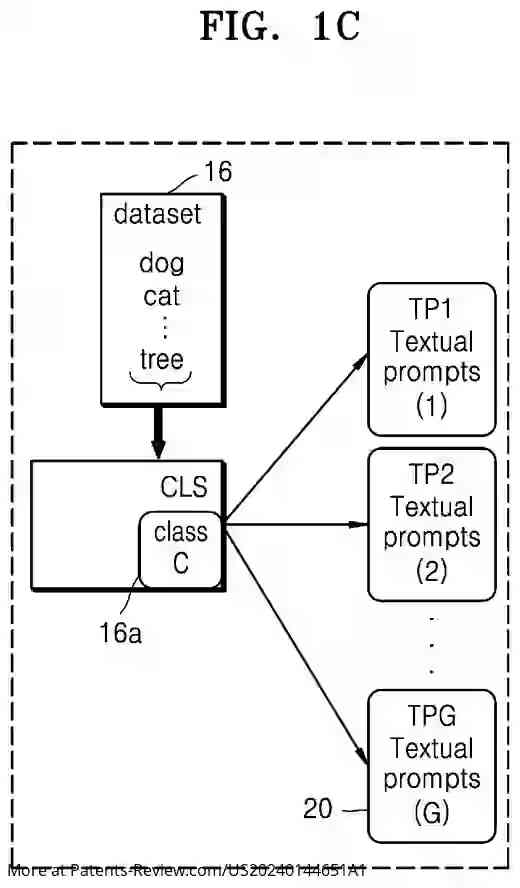

Training Methodology

The disclosed method generates augmented textual prompts for each class name in the training dataset and inputs them into a text encoder to produce text embeddings. A cross-entropy loss compares these embeddings with the embeddings produced from learnable soft prompts that are concatenated with the class names before encoding. Minimizing this loss refines the soft prompts, pulling them closer to the textual prompts in embedding space, with the goal of improving the model's adaptability and classification accuracy across classes.
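The loss described above can be illustrated with a minimal sketch. It is not the patent's implementation: the function name `text_to_text_loss`, the cosine-similarity logits, and the `temperature` value are assumptions made for illustration, and the embeddings are plain lists of floats standing in for the outputs of a frozen text encoder.

```python
import math


def _normalize(vec):
    """Scale a vector to unit length (assumes it is not all zeros)."""
    norm = math.sqrt(sum(x * x for x in vec))
    return [x / norm for x in vec]


def _cosine(u, v):
    """Cosine similarity between two vectors."""
    return sum(a * b for a, b in zip(_normalize(u), _normalize(v)))


def text_to_text_loss(prompt_embeddings, soft_embeddings, temperature=0.07):
    """Mean cross-entropy between textual-prompt and soft-prompt embeddings.

    prompt_embeddings: list of (class_index, vector) pairs, one per augmented
        textual prompt, as produced by a frozen text encoder.
    soft_embeddings: list of vectors, one per class, produced by encoding the
        learnable soft prompt together with that class name.
    temperature: hypothetical scaling factor for the logits (an assumption).
    """
    total = 0.0
    for target, embedding in prompt_embeddings:
        # One logit per class: similarity to that class's soft-prompt embedding.
        logits = [_cosine(embedding, s) / temperature for s in soft_embeddings]
        # Numerically stable log-sum-exp for the softmax denominator.
        peak = max(logits)
        log_z = peak + math.log(sum(math.exp(l - peak) for l in logits))
        # Cross-entropy term: negative log-probability of the correct class.
        total += log_z - logits[target]
    return total / len(prompt_embeddings)


# Toy 2-D embeddings: two augmented prompts for each of two classes.
prompts = [(0, [1.0, 0.0]), (0, [0.9, 0.1]), (1, [0.0, 1.0]), (1, [0.1, 0.9])]
aligned = [[1.0, 0.0], [0.0, 1.0]]   # soft prompts close to their own class
swapped = [[0.0, 1.0], [1.0, 0.0]]   # soft prompts close to the wrong class
print(text_to_text_loss(prompts, aligned) < text_to_text_loss(prompts, swapped))
```

In a real training loop the soft-prompt embeddings would depend on trainable parameters and the loss would be minimized by gradient descent while the text encoder stays frozen; the sketch only shows how the loss value is formed.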

Apparatus for Classification

An apparatus is also described that uses a trained vision-language ML model to classify images. It includes an interface for receiving text data, storage for a list of class names and their embeddings, and processors that generate a text embedding from the received text and compare it with the stored embeddings. If the comparison indicates that the class is novel, the class is added to the stored list, which extends the set of classes the model can classify.
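The storage-and-comparison behaviour of the apparatus can be sketched as follows. The summary does not say how novelty is decided, so the cosine-similarity test and the `threshold` value are assumptions, as are the names `ClassRegistry`, `register`, and `toy_encode`. The toy encoder is a stand-in for the trained text encoder and only counts letters.

```python
import math


def toy_encode(text):
    """Hypothetical stand-in for a text encoder: letter-count vector."""
    counts = [0.0] * 26
    for ch in text.lower():
        if "a" <= ch <= "z":
            counts[ord(ch) - ord("a")] += 1.0
    return counts


def _unit(vec):
    """Scale a vector to unit length; leave an all-zero vector unchanged."""
    norm = math.sqrt(sum(x * x for x in vec)) or 1.0
    return [x / norm for x in vec]


class ClassRegistry:
    """Stores class names with their text embeddings and admits novel ones."""

    def __init__(self, encode, threshold=0.95):
        self.encode = encode          # callable: class name -> embedding
        self.threshold = threshold    # assumed similarity cut-off for "known"
        self.names = []
        self.embeddings = []

    def register(self, class_name):
        """Return True and store the class if it is judged novel."""
        embedding = _unit(self.encode(class_name))
        # Highest cosine similarity to any stored class (-1.0 if none stored).
        best = max(
            (sum(a * b for a, b in zip(embedding, e)) for e in self.embeddings),
            default=-1.0,
        )
        is_novel = best < self.threshold
        if is_novel:
            self.names.append(class_name)
            self.embeddings.append(embedding)
        return is_novel


registry = ClassRegistry(toy_encode)
registry.register("cat")   # stored: nothing to compare against yet
registry.register("dog")   # stored: dissimilar to "cat" under the toy encoder
registry.register("cat")   # not stored: matches an existing entry
print(registry.names)
```

A fixed threshold is the simplest possible decision rule; a deployed system might instead compare names directly or use a rule tuned to the encoder.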

Contributions and Innovations

The invention addresses overfitting to base classes by adding a text-to-text loss that aligns the learned soft prompts with textual prompts in embedding space. This approach draws on the information already captured by the text encoder and allows a vision-language model to be adapted through language-only optimization. According to the disclosure, the method improves the efficiency of prompt learning and its accuracy on novel classes compared with existing techniques.