GENERATING AND PROVIDING IMMERSIVE EXPERIENCES TO USERS ISOLATED FROM EXTERNAL STIMULI

US20240160276

2024-05-16

Physics

G06F3/011

Drawings (4 of 21)

Smart overview of the Invention

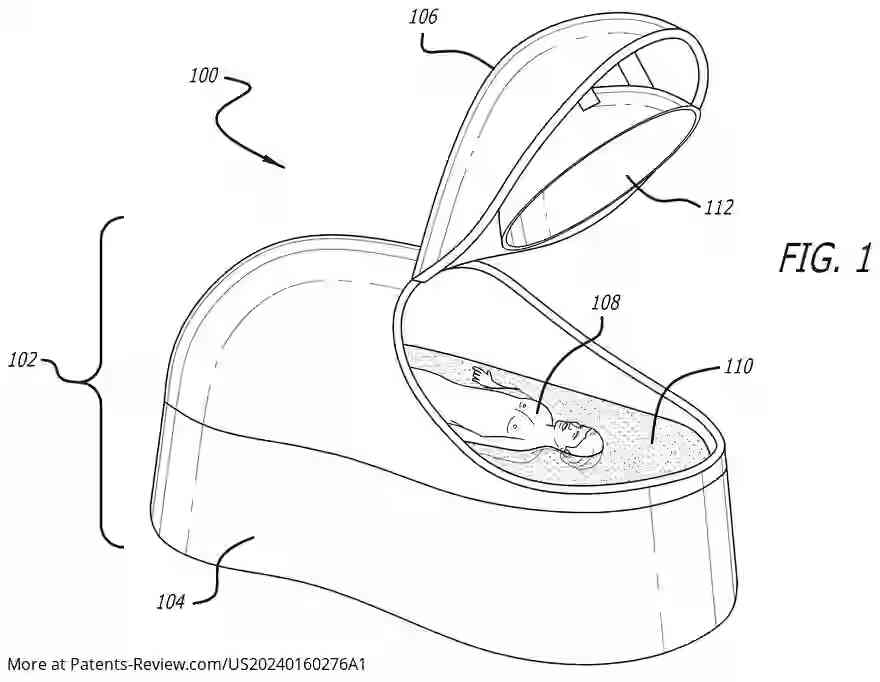

The immersive experience system provides users with interactive content while isolating them from external stimuli inside an isolation chamber. Users float in a high-density suspension liquid kept at body temperature while receiving audio, video, and tactile inputs. They interact with the system through several modalities, including eye movements, gestures, and thought inputs provided via a visual cortex thought recorder.

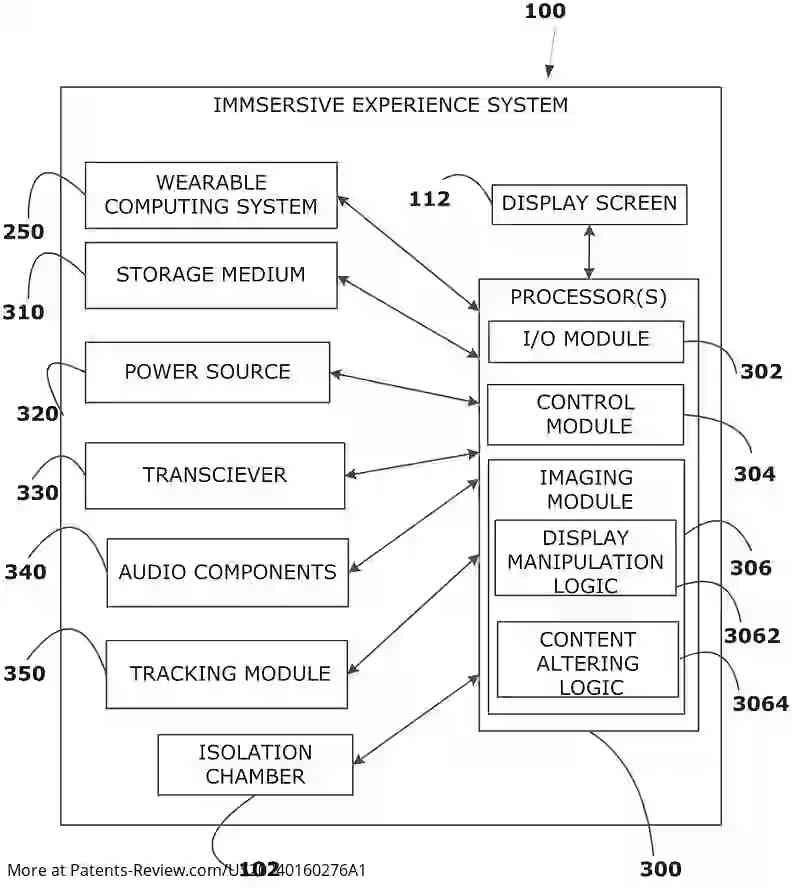

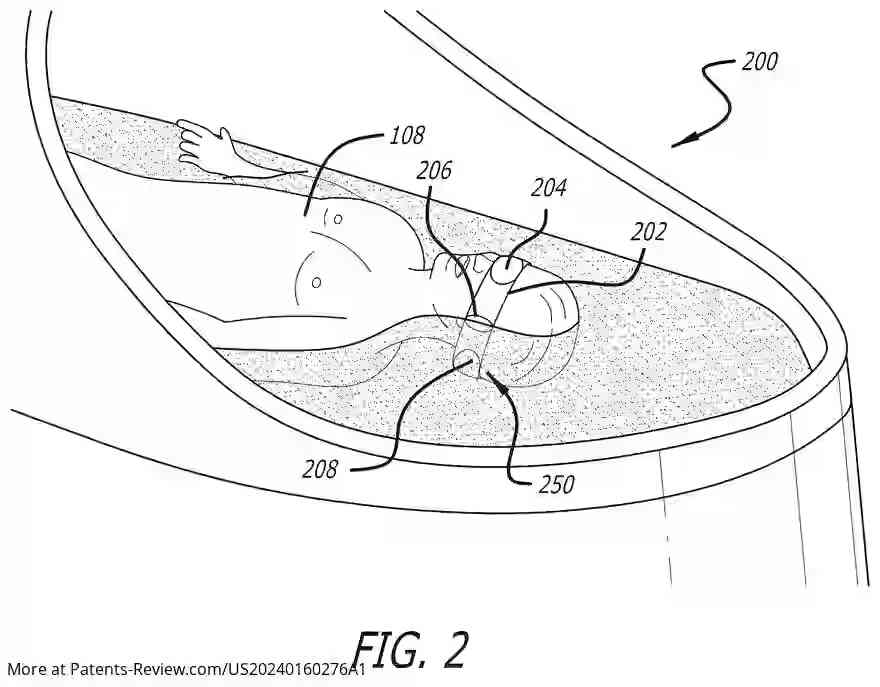

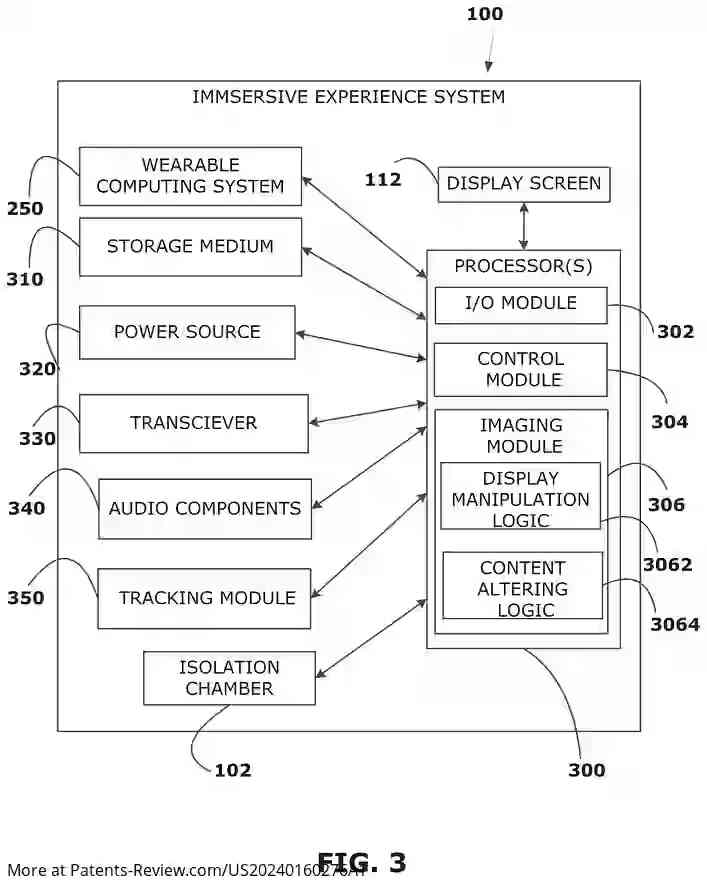

System Components

The system features a sensory deprivation chamber, comprising a base and a lid, that accommodates a user floating in a suspension liquid. A display screen provides visual input and covers the user's entire field of view. A processor and a storage medium execute the programming logic, and an audio input device delivers audio to the user. The system also incorporates a tracking module with a camera that monitors eye movements.

User Interaction

Programming logic within the system processes user inputs and manipulates the display based on eye-tracking data or on thought inputs detected by the visual cortex thought detector. In response to these inputs, the system can alter attributes of the content shown on the display.
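
The input handling described above can be illustrated with a minimal Python sketch. It is not the patented implementation: the names `DisplayState`, `EyeSample`, `ThoughtSample`, and `update_display`, the normalized coordinates, and the "brighter"/"darker" labels are all assumptions made for illustration, since the source does not specify which content attributes change or how inputs are encoded.

```python
from dataclasses import dataclass, replace
from typing import Tuple, Union


@dataclass(frozen=True)
class DisplayState:
    # Hypothetical content attributes; the source does not enumerate them.
    focus: Tuple[float, float] = (0.5, 0.5)  # normalized screen coordinates
    brightness: float = 0.5                  # 0.0 (dark) to 1.0 (bright)


@dataclass(frozen=True)
class EyeSample:
    # Assumed output of the eye-tracking module: a gaze point on the display.
    x: float
    y: float


@dataclass(frozen=True)
class ThoughtSample:
    # Assumed output of the thought detector: an already-decoded label.
    label: str


def _clamp(value: float) -> float:
    """Limit a value to the range [0.0, 1.0]."""
    return min(1.0, max(0.0, value))


def update_display(state: DisplayState,
                   sample: Union[EyeSample, ThoughtSample]) -> DisplayState:
    """Return a new display state reflecting one user input."""
    if isinstance(sample, EyeSample):
        # Eye-tracking input moves the focus point to the gaze location.
        return replace(state, focus=(_clamp(sample.x), _clamp(sample.y)))
    if isinstance(sample, ThoughtSample):
        # Thought input alters a content attribute (here, brightness).
        if sample.label == "brighter":
            return replace(state, brightness=_clamp(state.brightness + 0.25))
        if sample.label == "darker":
            return replace(state, brightness=_clamp(state.brightness - 0.25))
    # Unrecognized inputs leave the display unchanged.
    return state
```

Keeping the state immutable and returning a new value for each input makes it straightforward to handle both modalities in a single update function, whatever attributes a real system would expose.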

Wearable Computing System

An optional wearable computing system can be integrated, featuring a flexible frame with tactile elements for feedback. The wearable system includes an eyepiece that blocks ambient light and provides the visual display. It processes data through an onboard processor and includes tactile element control logic that responds to display changes.

Methodology and Storage

The method involves displaying visual content via a wearable device's display screen and providing synchronized tactile feedback. This feedback is mapped to specific parts of an avatar in the visual content. Movements of eyes and facial muscles are detected to execute tasks such as display manipulation or avatar interaction. A non-transitory storage medium contains instructions for executing these processes.
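
One way to picture the mapping between avatar parts and tactile feedback is the short Python sketch below. It is an illustration under stated assumptions, not the method claimed in the patent: the part names, the element IDs in `AVATAR_PART_TO_ELEMENTS`, the `TactileCommand` type, and the use of a display frame index for synchronization are all hypothetical.

```python
from dataclasses import dataclass
from typing import Dict, List

# Hypothetical mapping from avatar body parts to tactile element IDs
# on the wearable frame. The source does not define these values.
AVATAR_PART_TO_ELEMENTS: Dict[str, List[int]] = {
    "left_hand": [0, 1],
    "right_hand": [2, 3],
    "torso": [4, 5, 6],
}


@dataclass(frozen=True)
class TactileCommand:
    element_id: int   # which tactile element to actuate
    intensity: float  # 0.0 (off) to 1.0 (maximum)
    frame_index: int  # display frame the feedback should coincide with


def commands_for_contact(part: str, intensity: float,
                         frame_index: int) -> List[TactileCommand]:
    """Build tactile commands for a contact on one avatar part.

    Every command carries the frame index of the visual event, so a
    driver can fire the feedback in step with the display. Unmapped
    avatar parts produce no commands.
    """
    level = min(1.0, max(0.0, intensity))  # clamp to the valid range
    return [
        TactileCommand(element_id, level, frame_index)
        for element_id in AVATAR_PART_TO_ELEMENTS.get(part, [])
    ]
```

For example, `commands_for_contact("torso", 0.4, 120)` yields three commands, one for each element assumed to sit over the torso, all tagged with frame 120.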