REAL-TIME SYSTEM FOR SPOKEN NATURAL STYLISTIC CONVERSATIONS WITH LARGE LANGUAGE MODELS

US20240169974

2024-05-23

Physics

G10L13/10

Smart overview of the Invention

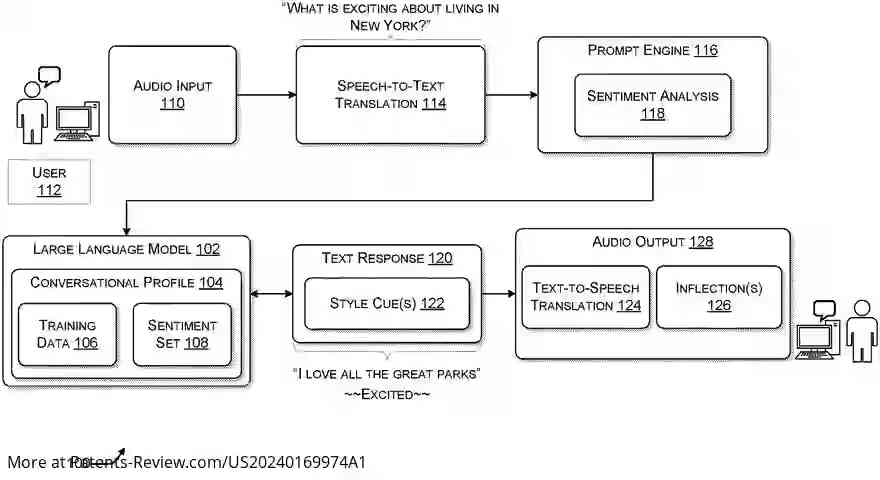

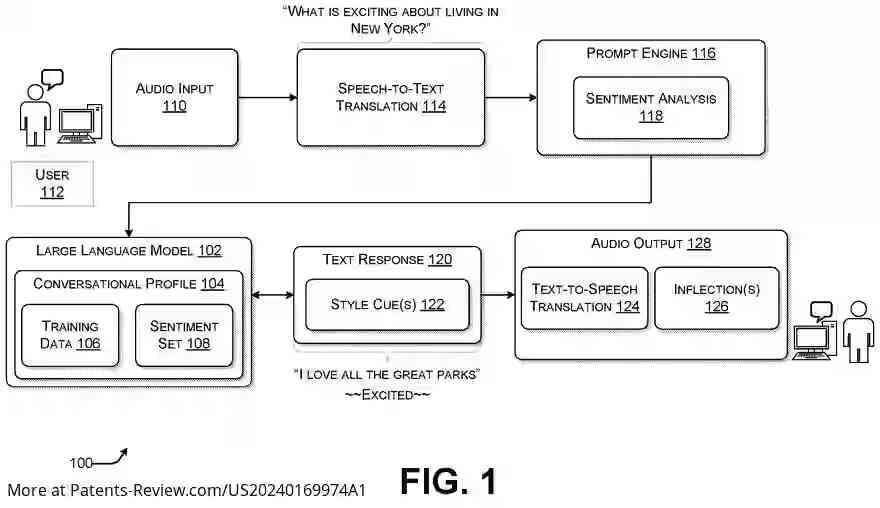

The patent application describes a system that enables real-time spoken conversations with large language models. Unlike traditional interactions, which are limited to text, the system lets users hold fully spoken dialogues. It converts the user's speech audio into text and analyzes the sentiment the user expresses. A large language model, trained on example conversations, then generates a text response together with a style cue that expresses an emotion suited to the user's sentiment. A text-to-speech engine interprets the response and the style cue to produce audio output that simulates human conversation.
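The turn-by-turn data flow described above can be sketched in Python. This is a minimal illustration, not the patented implementation. Every stage (`speech_to_text`, `analyze_sentiment`, `generate_reply`, `text_to_speech`) is a hypothetical stub standing in for a real speech-to-text service, sentiment analyzer, large language model and text-to-speech engine.

```python
from dataclasses import dataclass

# All four stages are hypothetical stubs; a real system would call actual
# speech-to-text, sentiment-analysis, LLM, and text-to-speech services.

@dataclass
class Reply:
    text: str       # what the assistant says
    style_cue: str  # how it should sound, e.g. "excited"

def speech_to_text(audio: bytes) -> str:
    # Stub: treat the audio bytes as already-transcribed UTF-8 text.
    return audio.decode("utf-8")

def analyze_sentiment(transcript: str) -> str:
    # Stub: a trivial keyword rule in place of real sentiment analysis.
    if "!" in transcript or "exciting" in transcript.lower():
        return "excited"
    return "neutral"

def generate_reply(transcript: str, sentiment: str) -> Reply:
    # Stub for the large language model: echo the input and mirror the sentiment.
    return Reply(text=f"You said: {transcript}", style_cue=sentiment)

def text_to_speech(reply: Reply) -> bytes:
    # Stub: tag the text with its style cue instead of synthesizing audio.
    return f"[{reply.style_cue}] {reply.text}".encode("utf-8")

def converse(audio_in: bytes) -> bytes:
    """One turn: audio -> text -> sentiment -> styled reply -> audio."""
    transcript = speech_to_text(audio_in)
    sentiment = analyze_sentiment(transcript)
    reply = generate_reply(transcript, sentiment)
    return text_to_speech(reply)

print(converse(b"What is exciting about New York?"))
```

Keeping each stage behind its own function boundary makes it possible to swap any stub for a real service without touching the orchestration in `converse`.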

Background

Large language models have advanced significantly and now offer capabilities across domains such as vision, speech, and language processing. They use self-attention mechanisms to model context and generate new content. However, most current ways of interacting with these models are text-based, which narrows their range of applications and limits their accessibility for users without technical expertise. This invention addresses the need for more interactive and intuitive interfaces to large language models.
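The self-attention mechanism mentioned above is commonly implemented as scaled dot-product attention, softmax(Q Kᵀ / √d_k) V. The pure-Python sketch below is a generic textbook illustration of that formula. It does not describe the specific model used in the patent application, and the weight matrices in the usage line are arbitrary.

```python
import math

def softmax(xs: list[float]) -> list[float]:
    m = max(xs)  # subtract the max for numerical stability
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]

def matmul(a: list[list[float]], b: list[list[float]]) -> list[list[float]]:
    # (n x k) @ (k x m) -> (n x m)
    return [[sum(a[i][k] * b[k][j] for k in range(len(b)))
             for j in range(len(b[0]))] for i in range(len(a))]

def transpose(m: list[list[float]]) -> list[list[float]]:
    return [list(row) for row in zip(*m)]

def self_attention(x, w_q, w_k, w_v):
    """Scaled dot-product self-attention over token vectors x (one row per token).

    Returns the attended outputs and the attention weights; each row of the
    weights is a probability distribution over all tokens in the sequence.
    """
    q, k, v = matmul(x, w_q), matmul(x, w_k), matmul(x, w_v)
    d_k = len(k[0])
    scores = matmul(q, transpose(k))
    weights = [softmax([s / math.sqrt(d_k) for s in row]) for row in scores]
    return matmul(weights, v), weights

# Three 2-dimensional tokens with identity projections (illustrative only).
x = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
eye = [[1.0, 0.0], [0.0, 1.0]]
out, weights = self_attention(x, eye, eye, eye)
```

Because every token attends to every other token, each output row mixes information from the whole sequence. This is what lets such models take context into account.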

System Functionality

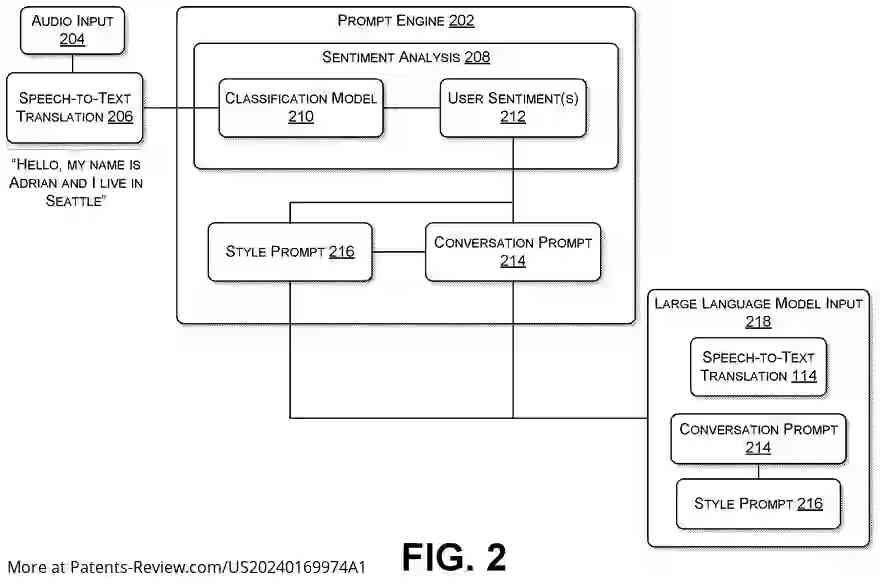

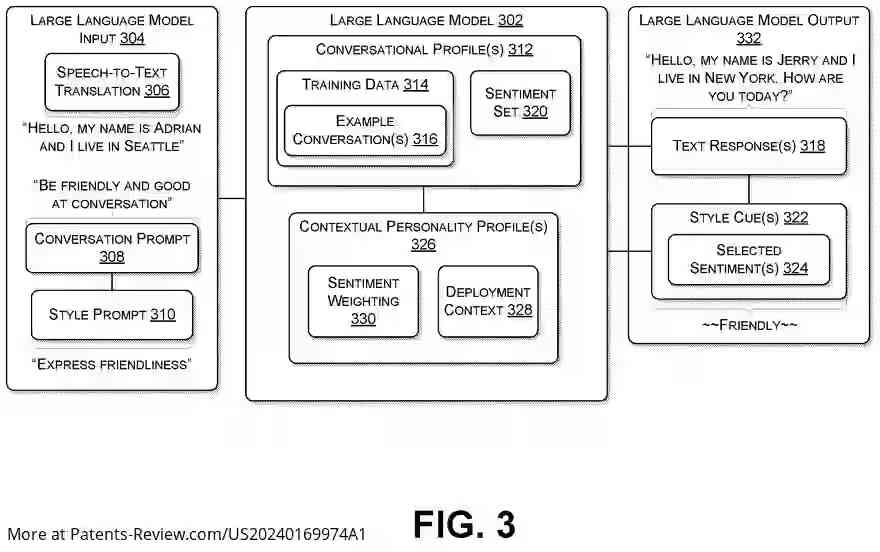

The system provides a natural language interface for spoken interaction through a prompt engine that analyzes the user's speech input to determine its sentiment. This sentiment guides the large language model's responses within a conversational framework. Each response includes a style cue that lets the spoken output express emotion, which makes the conversation more engaging and lifelike than a text-only exchange.
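One way a downstream component could separate a style cue from the response text is sketched below. It assumes a hypothetical output convention in which the model prefixes its reply with a bracketed cue, such as `[excited] I love all the great parks.`. The function name `parse_styled_reply` and the fallback to `"neutral"` are illustrative assumptions, not details from the application.

```python
import re

# Hypothetical output convention: "[cue] reply text".
CUE_PATTERN = re.compile(r"^\s*\[(?P<cue>[a-z_]+)\]\s*(?P<text>.*)$",
                         re.DOTALL | re.IGNORECASE)

def parse_styled_reply(raw: str, allowed_cues: set[str],
                       default: str = "neutral") -> tuple[str, str]:
    """Split raw model output into (style_cue, text).

    Falls back to `default` when the cue is missing or not in the allowed
    set, so the text-to-speech stage never receives an unsupported style.
    """
    match = CUE_PATTERN.match(raw)
    if not match:
        return default, raw.strip()
    cue = match.group("cue").lower()
    text = match.group("text").strip()
    return (cue if cue in allowed_cues else default), text

print(parse_styled_reply("[excited] I love all the great parks.", {"excited", "neutral"}))
```

Validating the cue against an allowed set keeps a model that improvises an unexpected emotion label from breaking speech synthesis.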

Technical Implementation

The large language model is configured with a conversational profile that comprises training data and a sentiment set. When a user speaks, the model receives the speech audio input, which is converted into text by speech-to-text translation. The prompt engine processes this text to perform sentiment analysis, taking into account factors such as word choice and vocal tone. Based on this analysis, the model generates a text response and selects an appropriate sentiment or style cue to accompany it.
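The steps above can be sketched as follows, under several stated assumptions. `ConversationalProfile` is a hypothetical structure holding example conversations and a sentiment set. Word choice is scored with toy keyword lists. Vocal tone is reduced to two hypothetical features, pitch and loudness relative to the speaker's baseline. The weights, thresholds and prompt wording are invented for illustration, and a real prompt engine would use trained models instead.

```python
from dataclasses import dataclass, field

@dataclass
class ConversationalProfile:
    # Example (user, assistant) exchanges used to condition the model.
    examples: list[tuple[str, str]] = field(default_factory=list)
    # Sentiments the model may express as style cues.
    sentiment_set: tuple[str, ...] = ("neutral", "excited", "sad")

# Hypothetical keyword lists standing in for a real lexical sentiment model.
EXCITED_WORDS = {"exciting", "amazing", "love", "awesome", "wow"}
SAD_WORDS = {"sad", "tired", "lonely", "miss", "sorry"}
TONE_WEIGHT = 2.0  # hypothetical weight given to vocal tone
MIN_SCORE = 0.5    # hypothetical threshold below which we stay neutral

def word_choice_scores(transcript: str) -> dict[str, float]:
    """Count sentiment-bearing words in the transcript."""
    words = [w.strip(".,!?").lower() for w in transcript.split()]
    return {
        "excited": float(sum(w in EXCITED_WORDS for w in words)),
        "sad": float(sum(w in SAD_WORDS for w in words)),
    }

def detect_sentiment(transcript: str, pitch_ratio: float, energy_ratio: float,
                     profile: ConversationalProfile) -> str:
    """Combine word choice with vocal tone.

    pitch_ratio and energy_ratio are hypothetical prosodic features: the
    speaker's pitch and loudness relative to their own baseline (1.0 = normal).
    """
    scores = word_choice_scores(transcript)
    arousal = (pitch_ratio + energy_ratio) / 2 - 1.0  # > 0: livelier than usual
    scores["excited"] += max(arousal, 0.0) * TONE_WEIGHT
    scores["sad"] += max(-arousal, 0.0) * TONE_WEIGHT
    best = max(scores, key=scores.get)
    # Stay neutral on weak evidence or when the profile does not allow the sentiment.
    if scores[best] < MIN_SCORE or best not in profile.sentiment_set:
        return "neutral"
    return best

def build_prompt(profile: ConversationalProfile, transcript: str, sentiment: str) -> str:
    """Assemble a few-shot prompt that passes the detected sentiment to the model."""
    lines = [f"Start each reply with one style cue in brackets, chosen from: "
             f"{', '.join(profile.sentiment_set)}."]
    for user_turn, assistant_turn in profile.examples:
        lines.append(f"User: {user_turn}\nAssistant: {assistant_turn}")
    lines.append(f"(The user sounds {sentiment}.)\nUser: {transcript}\nAssistant:")
    return "\n".join(lines)

profile = ConversationalProfile(examples=[("Any plans tonight?", "[excited] A concert, I can't wait!")])
sentiment = detect_sentiment("What is exciting about New York?", 1.3, 1.2, profile)
print(build_prompt(profile, "What is exciting about New York?", sentiment))
```

Checking the detected sentiment against the profile's sentiment set means a profile can restrict which emotions the assistant is ever allowed to express.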

Example Interaction

In one example, a user asks what is exciting about New York, and the system determines an "excited" sentiment from the user's input. The model generates a response such as "I love all the great parks," accompanied by an "excited" style cue. Text-to-speech then converts this response into audio output with added inflection that conveys excitement, creating an immersive conversational experience.
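Many text-to-speech engines accept W3C SSML input, so one plausible way to add inflection for a style cue is to wrap the text in an SSML `<prosody>` element. This is a sketch under that assumption. The application does not specify SSML, and the cue-to-prosody mapping in `PROSODY_BY_CUE` is a hypothetical choice.

```python
from xml.sax.saxutils import escape

# Hypothetical mapping from style cue to standard SSML 1.1 <prosody> attributes.
PROSODY_BY_CUE = {
    "excited": {"pitch": "+15%", "rate": "fast", "volume": "loud"},
    "sad": {"pitch": "-10%", "rate": "slow", "volume": "soft"},
    "neutral": {},
}

def to_ssml(text: str, style_cue: str) -> str:
    """Render response text as SSML, adding prosody for the given style cue."""
    settings = PROSODY_BY_CUE.get(style_cue, {})
    body = escape(text)  # escape &, <, > so the document stays well-formed
    if settings:
        attrs = " ".join(f'{name}="{value}"' for name, value in settings.items())
        body = f"<prosody {attrs}>{body}</prosody>"
    return ('<speak version="1.1" xmlns="http://www.w3.org/2001/10/synthesis" '
            f'xml:lang="en-US">{body}</speak>')

print(to_ssml("I love all the great parks.", "excited"))
```

Unknown cues fall back to plain, unmodified speech rather than raising an error.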