CONTEXT-AWARE END-TO-END ASR FUSION OF CONTEXT, ACOUSTIC AND TEXT PRESENTATIONS

US20240185844

2024-06-06

Physics

G10L15/18

Smart overview of the Invention

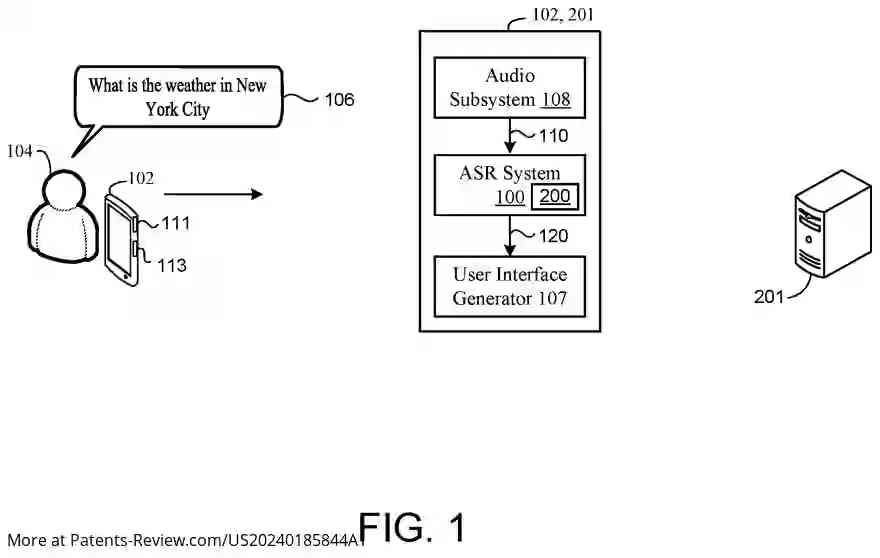

This disclosure pertains to the field of automatic speech recognition (ASR), specifically focusing on context-aware end-to-end ASR systems that integrate context, acoustic, and text representations. The innovation aims to enhance the accuracy and efficiency of speech recognition by considering contextual information from previous utterances alongside current audio inputs.

Background

Traditional ASR systems often process audio as a series of independent segments, which can cause errors because each segment is recognized without the context around it. In long-form speech, such systems may misrecognize terms that earlier parts of the conversation would have disambiguated. The described approach addresses this limitation by conditioning recognition of the current utterance on transcriptions of previous utterances.

Summary

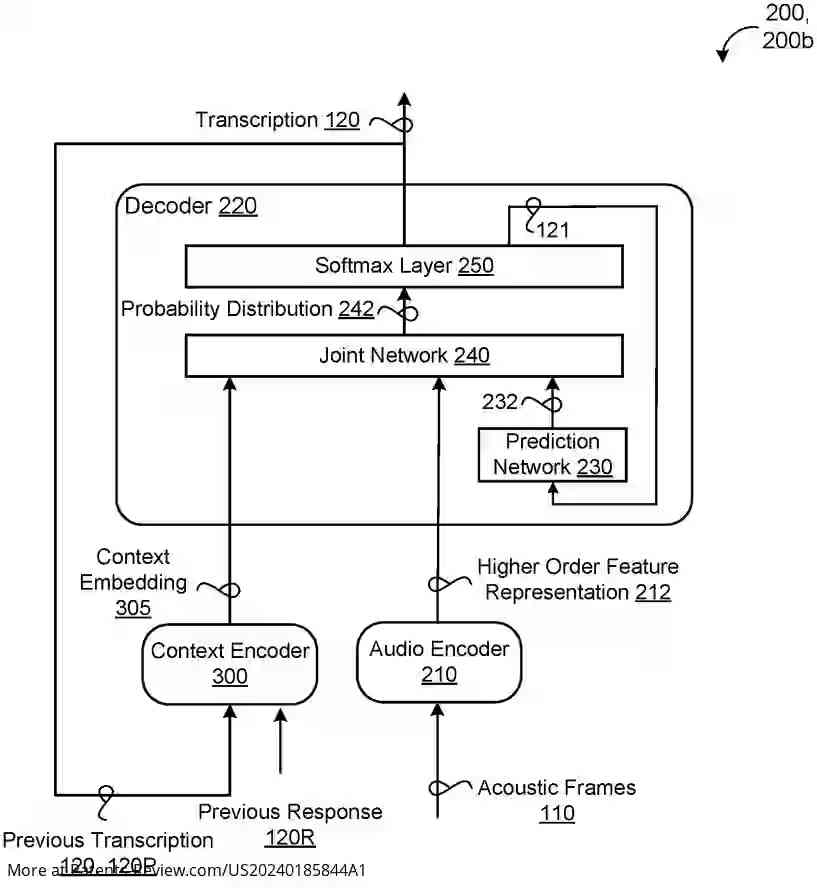

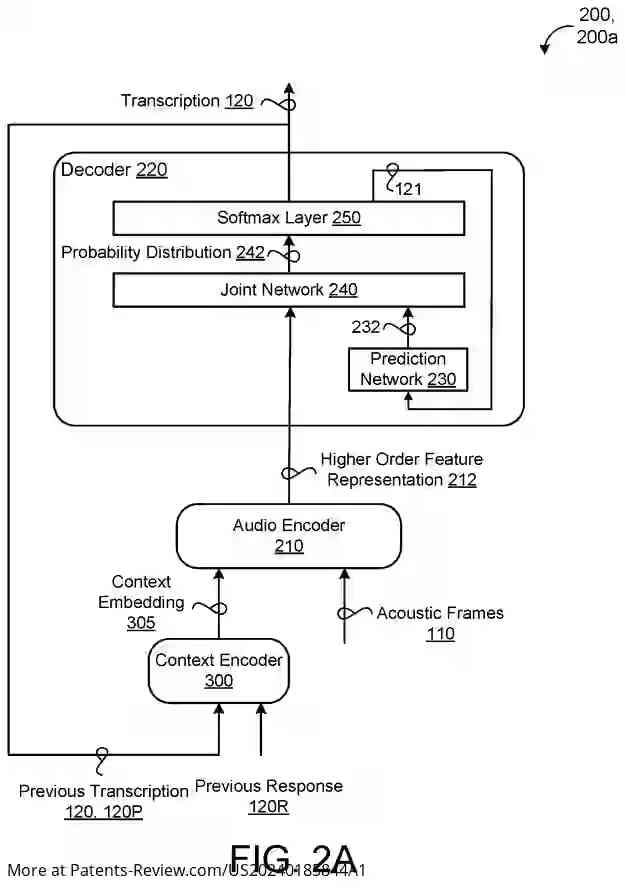

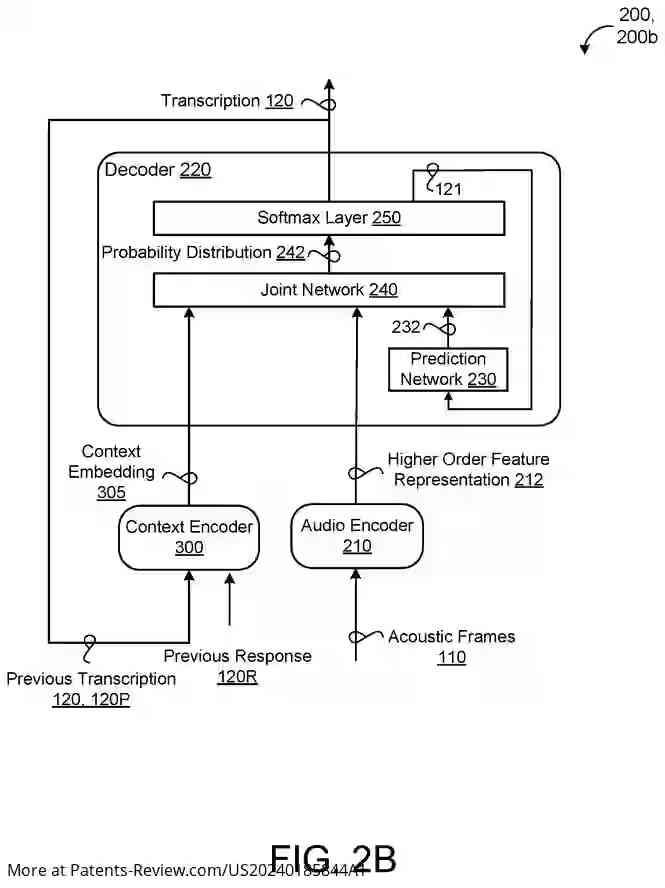

The ASR model includes four key components: an audio encoder, a context encoder, a prediction network, and a joint network. The audio encoder processes a sequence of acoustic frames to generate higher-order feature representations. The context encoder uses previous transcriptions to generate context embeddings. The prediction network produces dense representations from the non-blank symbols output by a final Softmax layer. Finally, at each output step, the joint network combines the higher-order feature representation, the context embedding, and the dense representation to generate a probability distribution over possible speech recognition hypotheses.
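
The fusion performed by the joint network at a single output step can be made concrete with a toy sketch. It assumes additive fusion (project each input into a shared hidden space, sum, apply tanh, project to vocabulary logits, then softmax), which is a common transducer-style joint network; the disclosure does not state that this is its exact form. The dimensions, random weights, and helper names such as `joint_network` and `matvec` are hypothetical.

```python
import math
import random

def matvec(W, x):
    """Multiply matrix W (a list of rows) by vector x."""
    return [sum(w * xi for w, xi in zip(row, x)) for row in W]

def softmax(logits):
    """Numerically stable softmax over a list of floats."""
    m = max(logits)
    exps = [math.exp(v - m) for v in logits]
    total = sum(exps)
    return [e / total for e in exps]

def random_matrix(rows, cols, rng):
    """Hypothetical untrained weights, for illustration only."""
    return [[rng.uniform(-0.5, 0.5) for _ in range(cols)] for _ in range(rows)]

def joint_network(audio_feat, context_emb, dense_rep, params):
    """Fuse acoustic, context, and text (prediction network) representations.

    Assumed additive fusion: h = tanh(Wa*a + Wc*c + Wp*p); probs = softmax(Wo*h).
    """
    Wa, Wc, Wp, Wo = params
    ha = matvec(Wa, audio_feat)    # higher-order acoustic features -> hidden
    hc = matvec(Wc, context_emb)   # context embedding -> hidden
    hp = matvec(Wp, dense_rep)     # prediction network output -> hidden
    hidden = [math.tanh(x + y + z) for x, y, z in zip(ha, hc, hp)]
    return softmax(matvec(Wo, hidden))  # distribution over output symbols

rng = random.Random(0)
AUDIO_DIM, CONTEXT_DIM, PRED_DIM, HIDDEN_DIM, VOCAB = 8, 6, 4, 5, 10
params = (
    random_matrix(HIDDEN_DIM, AUDIO_DIM, rng),
    random_matrix(HIDDEN_DIM, CONTEXT_DIM, rng),
    random_matrix(HIDDEN_DIM, PRED_DIM, rng),
    random_matrix(VOCAB, HIDDEN_DIM, rng),
)
audio_feat = [rng.gauss(0, 1) for _ in range(AUDIO_DIM)]
context_emb = [rng.gauss(0, 1) for _ in range(CONTEXT_DIM)]
dense_rep = [rng.gauss(0, 1) for _ in range(PRED_DIM)]

probs = joint_network(audio_feat, context_emb, dense_rep, params)
print(len(probs), round(sum(probs), 6))
```

Projecting each input separately lets the three representations have different sizes; only the hidden width has to match before they are summed.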

Optional Features

- The final Softmax layer identifies the most probable speech recognition hypothesis at each step, contributing to the transcription generation.

- The context encoder may utilize a pre-trained Bidirectional Encoder Representations from Transformers (BERT) model, which processes previous transcriptions into wordpiece embeddings.

- A pooling layer within the context encoder applies self-attentive pooling, potentially using multi-head self-attention layers such as Conformer or Transformer layers.

- The BERT model can prepend and append classification tokens to the wordpiece embeddings from which the context embedding is generated.
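
The idea behind self-attentive pooling, collapsing a variable-length sequence of wordpiece embeddings into one fixed-size context embedding, can be illustrated with a simplified sketch. It assumes a single attention head with one learned scoring vector, whereas the disclosure describes multi-head self-attention layers (e.g., Conformer or Transformer layers). The random vectors stand in for BERT wordpiece embeddings, and the names `self_attentive_pool` and `score_vector` are hypothetical.

```python
import math
import random

def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def self_attentive_pool(embeddings, score_vector):
    """Pool a sequence of vectors into one vector via attention weights.

    score_i = <embeddings[i], score_vector>
    weights = softmax(scores)
    pooled  = sum_i weights[i] * embeddings[i]
    """
    scores = [sum(e * w for e, w in zip(emb, score_vector)) for emb in embeddings]
    weights = softmax(scores)
    dim = len(embeddings[0])
    pooled = [
        sum(weights[i] * embeddings[i][d] for i in range(len(embeddings)))
        for d in range(dim)
    ]
    return pooled, weights

rng = random.Random(1)
DIM = 4
# Hypothetical stand-ins for the wordpiece embeddings of a previous transcription.
wordpiece_embeddings = [[rng.gauss(0, 1) for _ in range(DIM)] for _ in range(7)]
score_vector = [rng.gauss(0, 1) for _ in range(DIM)]  # learned in a real model

context_embedding, weights = self_attentive_pool(wordpiece_embeddings, score_vector)
print(len(context_embedding), round(sum(weights), 6))
```

Because the weights sum to one, the pooled vector has the same size no matter how many wordpieces the previous transcription contains, which is what allows a single context embedding to be passed to the joint network.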

Implementation Details

The described method runs on data processing hardware that, at each of multiple output steps, generates a higher-order feature representation and a context embedding, and identifies the highest-probability speech recognition hypothesis for the transcription. Fusing context and acoustic information in this way is intended to improve both the accuracy and the latency of ASR.
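
The per-step selection of the highest-probability hypothesis can be sketched as a greedy decoding loop in the style of an RNN-T (transducer) decoder; using greedy transducer decoding here is an assumption, not a detail confirmed by the disclosure. The `toy_joint` and `toy_predict` functions are hypothetical stand-ins for the joint and prediction networks, `BLANK = 0` is an assumed blank index, and the `max_symbols_per_frame` cap is an assumption added to guarantee termination. At each step the most probable symbol is taken: a blank advances to the next acoustic frame, while a non-blank symbol is emitted and fed back to the prediction network.

```python
from typing import Callable, List, Sequence

BLANK = 0  # hypothetical index of the blank symbol

def argmax(values: Sequence[float]) -> int:
    return max(range(len(values)), key=lambda i: values[i])

def greedy_decode(
    frames: Sequence[List[float]],
    context_embedding: List[float],
    joint: Callable[[List[float], List[float], List[float]], List[float]],
    predict: Callable[[int], List[float]],
    max_symbols_per_frame: int = 3,
) -> List[int]:
    """Return the symbols chosen by taking the most probable label at each step."""
    hypothesis: List[int] = []
    dense = predict(BLANK)  # initial prediction state, seeded with the blank id
    for frame in frames:
        for _ in range(max_symbols_per_frame):
            probs = joint(frame, context_embedding, dense)
            best = argmax(probs)
            if best == BLANK:
                break  # blank: move on to the next acoustic frame
            hypothesis.append(best)
            dense = predict(best)  # only non-blank symbols update the prediction network
    return hypothesis

def toy_predict(symbol: int) -> List[float]:
    # Toy "dense representation": it only remembers the last non-blank symbol.
    return [float(symbol)]

def toy_joint(frame: List[float], context_embedding: List[float], dense: List[float]) -> List[float]:
    # Toy joint network: returns the frame's scores unless the frame's best label
    # was just emitted, in which case it predicts blank. Context is ignored here.
    if argmax(frame) == int(dense[0]):
        return [1.0] + [0.0] * (len(frame) - 1)
    return list(frame)

frames = [[0.1, 0.8, 0.1], [0.9, 0.05, 0.05], [0.2, 0.1, 0.7]]
print(greedy_decode(frames, [0.0], toy_joint, toy_predict))  # [1, 2]
```

A beam search over several partial hypotheses is the usual alternative when greedy selection is not accurate enough; the loop structure (blank advances the frame, non-blank updates the prediction network) stays the same.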