AUDIO AND VIDEO TRANSLATOR

US20240194183

2024-06-13

Physics

G10L13/08

Drawings (4 of 14)

Smart overview of the Invention

The invention translates audio and video using AI systems that improve translation accuracy by accounting for speech characteristics such as emotion, pacing, idioms, and tone. Processors and generators execute defined steps so that the translated output reflects the nuances of the original media. The system can also manipulate the video so that speakers' lip movements appear native to the generated audio.

Background

Traditional audio translation methods are labor-intensive, requiring multiple steps such as listening, transcribing, and dubbing. These processes often result in poor synchronization between the translated audio and the speakers' lip movements in the video. There is a need for a more efficient system that improves synchronization and reduces human intervention.

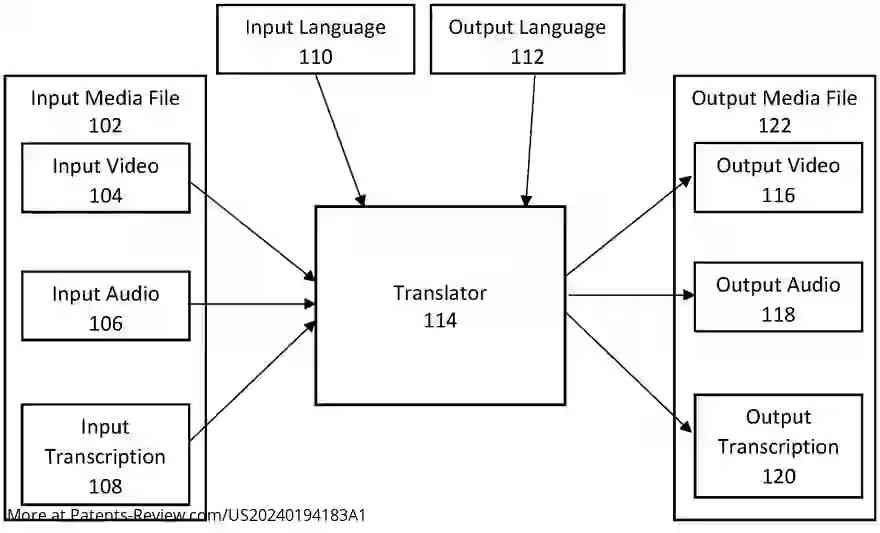

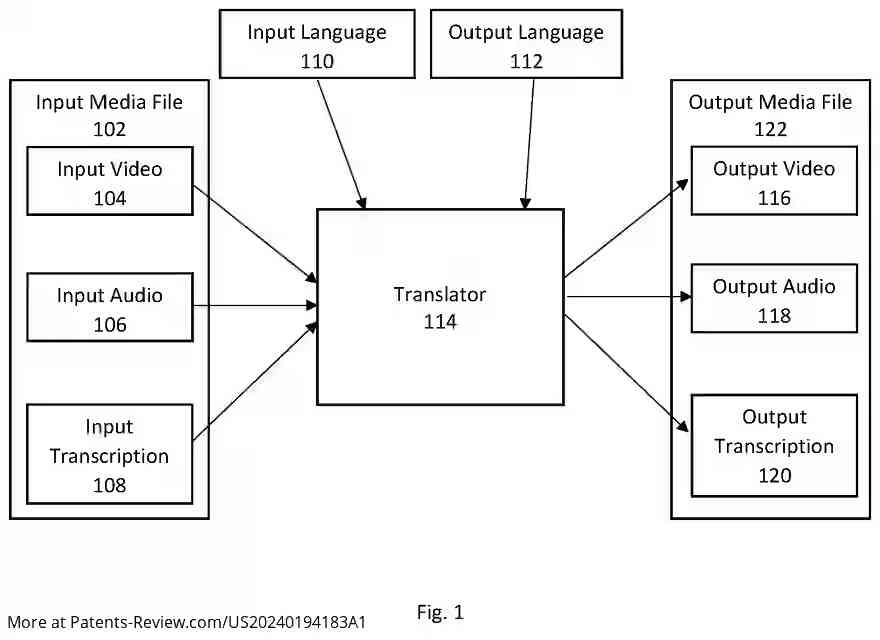

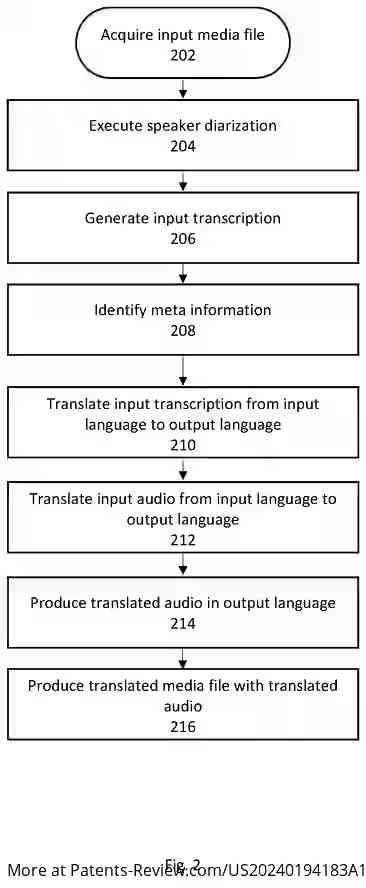

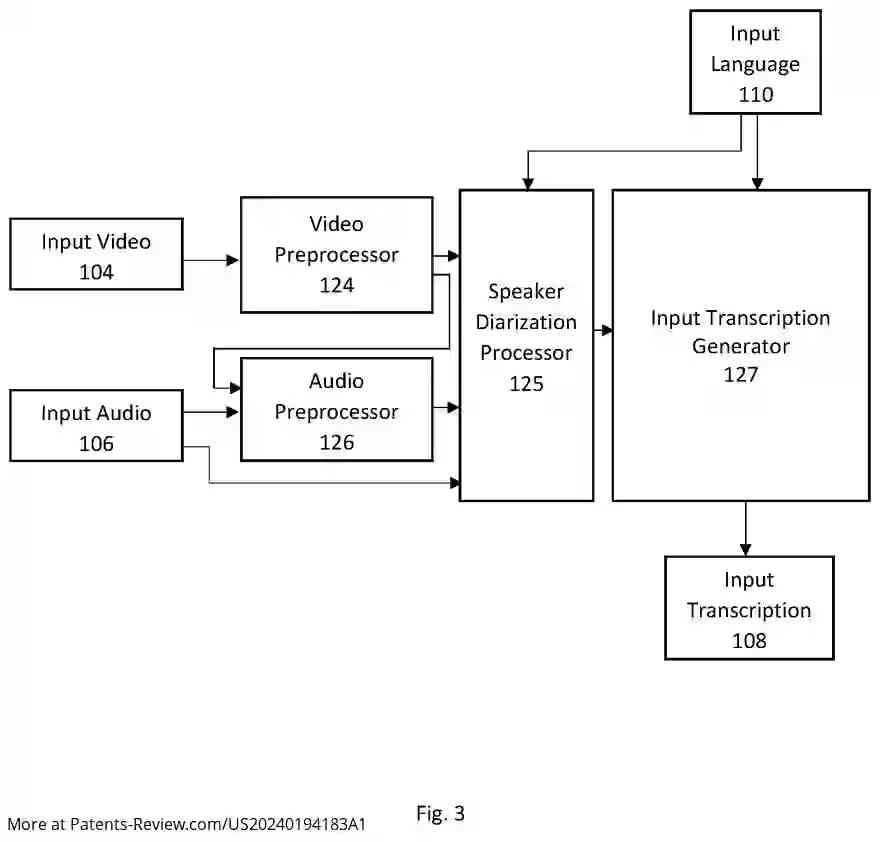

System and Method

The method begins by acquiring an input media file containing audio in an input language, optionally with video. An output language different from the input language is then identified. Preprocessing separates vocal streams, reduces noise, and captures lip movement data. The input audio is then divided into vocal segments, each with speaker identification and pacing details for every word or phoneme.
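The segmentation step above can be illustrated with a small data model. The following is a minimal sketch, not the patented implementation: the names `WordTiming`, `VocalSegment`, and `split_into_segments`, as well as the rule of starting a new segment on a speaker change or a pause longer than `max_gap` seconds, are assumptions made for illustration. It also assumes word-level timestamps and speaker labels are already available from an earlier step.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class WordTiming:
    text: str      # word (or phoneme) label
    start: float   # seconds from the start of the input audio
    end: float     # seconds from the start of the input audio
    speaker: str   # speaker label supplied by an earlier, assumed step


@dataclass
class VocalSegment:
    speaker: str
    words: List[WordTiming] = field(default_factory=list)

    @property
    def start(self) -> float:
        return self.words[0].start

    @property
    def end(self) -> float:
        return self.words[-1].end


def split_into_segments(words: List[WordTiming], max_gap: float = 0.6) -> List[VocalSegment]:
    """Group timed words into vocal segments.

    Assumed rule: a new segment starts when the speaker changes or when
    the silence since the previous word exceeds max_gap seconds.
    """
    segments: List[VocalSegment] = []
    for word in words:
        current = segments[-1] if segments else None
        if (
            current is None
            or current.speaker != word.speaker
            or word.start - current.end > max_gap
        ):
            current = VocalSegment(speaker=word.speaker)
            segments.append(current)
        current.words.append(word)
    return segments
```

Each resulting segment carries its speaker label and the per-word timing, which is the pacing information later steps would need.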

Translation Process

An AI system generates a transcription of the input audio, together with sentiment analysis and anatomical tracking data. Meta information such as emotion and tone is also extracted. The transcription and meta information are translated into the output language while preserving similar emotion and pacing. The translated transcription is then used to generate new audio, keeping the difference in phonetic timing from the original to a minimum.
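One way to picture the timing constraint is as a selection among candidate translations. The sketch below is a hypothetical illustration only: it assumes several candidate translations are available for a segment, each with an estimated spoken duration, and simply picks the one closest in length to the original. The functions `pick_closest_timing` and `stretch_factor` and the duration estimates are assumptions, not details from the source.

```python
from typing import List, Tuple

# A candidate is (translated_text, estimated_duration_in_seconds).
# How the duration is estimated is outside this sketch (assumed given).
Candidate = Tuple[str, float]


def pick_closest_timing(original_duration: float, candidates: List[Candidate]) -> Candidate:
    """Return the candidate whose estimated duration is closest to the original."""
    if not candidates:
        raise ValueError("at least one candidate translation is required")
    return min(candidates, key=lambda c: abs(c[1] - original_duration))


def stretch_factor(original_duration: float, generated_duration: float) -> float:
    """Playback-rate factor that would make the generated audio last as long
    as the original segment (values above 1.0 mean speaking faster)."""
    if original_duration <= 0:
        raise ValueError("original_duration must be positive")
    return generated_duration / original_duration
```

For example, with an original segment of 2.0 seconds and candidates estimated at 2.6, 2.1, and 1.5 seconds, the 2.1-second candidate is chosen, and any remaining mismatch could be absorbed by a small rate adjustment.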

Output Generation

The final step generates translated audio from the translated transcription and meta information. The system can stitch the translated segments into a single audio file and synchronize it with the video so that lip movements match the new audio. The approach targets the manual effort and synchronization problems of traditional translation methods.
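The stitching step can be sketched as placing each translated segment at its original start time on a silent track. This is a simplified illustration under stated assumptions: audio is represented as mono samples in plain Python lists, the function name `stitch_segments` is hypothetical, and overlapping segments are resolved by letting the later one overwrite the earlier one. Lip synchronization with the video is not covered here.

```python
from typing import List, Tuple

# A segment is (start_time_in_seconds, mono_samples).
Segment = Tuple[float, List[float]]


def stitch_segments(segments: List[Segment], sample_rate: int, total_duration: float) -> List[float]:
    """Place each segment's samples at its start time in a silent track.

    Samples that would fall past the end of the track are dropped, and on
    overlap the later-starting segment overwrites the earlier one
    (an assumed policy chosen for simplicity).
    """
    track = [0.0] * round(total_duration * sample_rate)
    for start, samples in sorted(segments, key=lambda seg: seg[0]):
        offset = round(start * sample_rate)
        for i, sample in enumerate(samples):
            position = offset + i
            if position >= len(track):
                break  # remaining samples do not fit in the track
            if position >= 0:
                track[position] = sample
    return track
```

Anchoring every segment to its original start time keeps the translated audio aligned with the video timeline, so that gaps between segments stay silent instead of shifting later speech.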