ENHANCED AUDIOVISUAL SYNCHRONIZATION USING SYNTHESIZED NATURAL SIGNALS

US20240195949

2024-06-13

Electricity

H04N17/00

Smart overview of the Invention

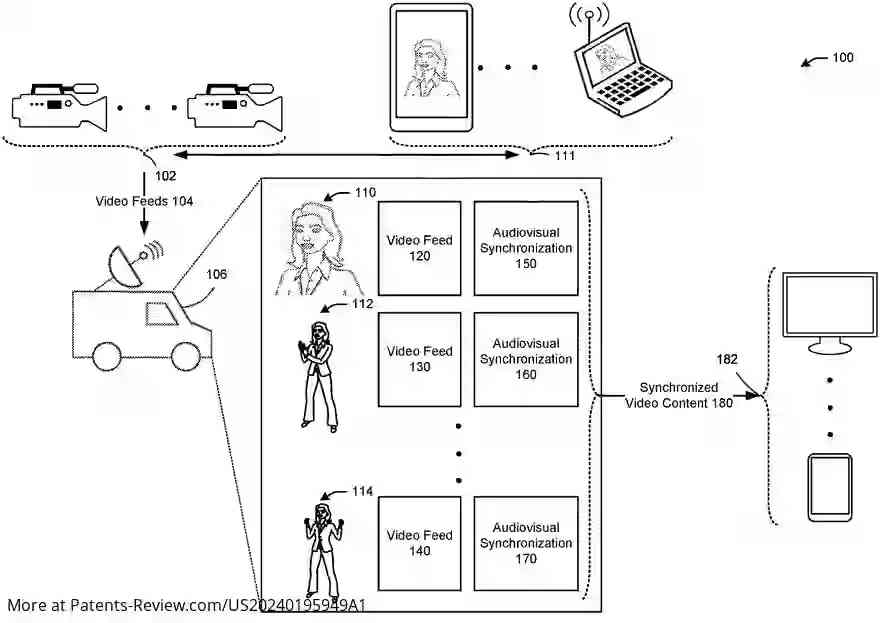

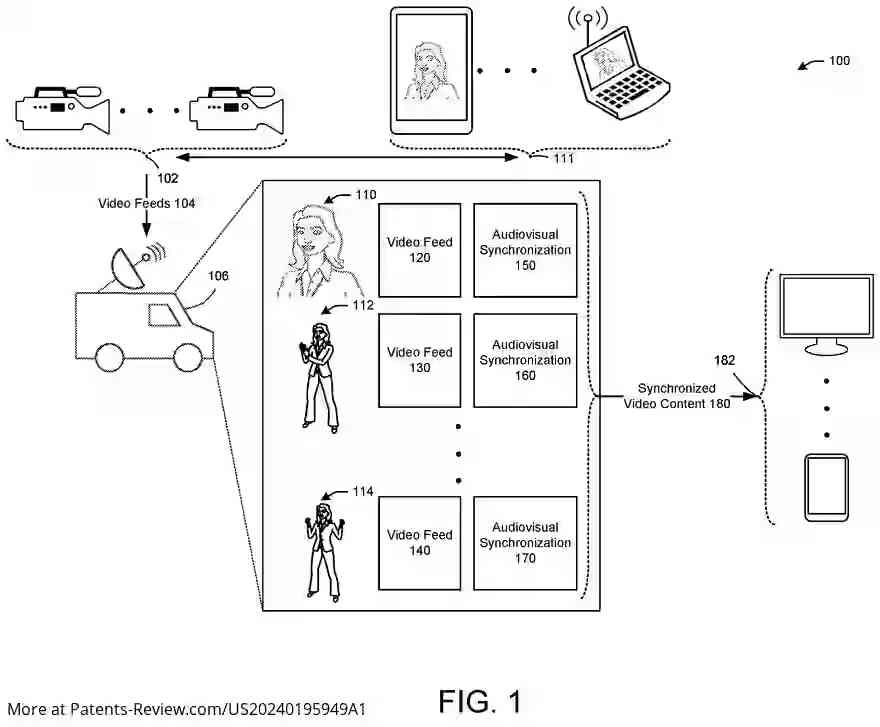

Devices, systems, and methods are disclosed for enhancing audiovisual synchronization using synthesized natural signals. The methods capture audio and video from cameras at events such as live streams. Before the content is distributed to user devices, synchronization techniques detect discrepancies between the audio and the video, for example spoken words that do not align with lip movements, so that they can be corrected before the content reaches viewers.

Existing Techniques and Challenges

Traditional synchronization methods often require manual intervention or rely on predefined patterns such as QR codes to measure the delay between audio and video signals. These patterns are not customized to a specific stream and may not resemble the content being captured. The disclosed approach instead synthesizes content that mimics natural signals, such as a talking head or finger snaps, which is easy to recognize and can be tailored to the stream being captured.

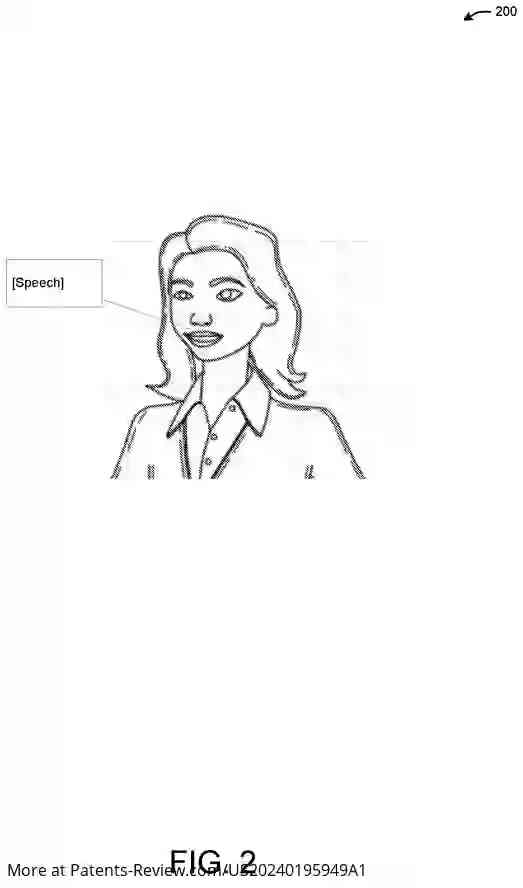

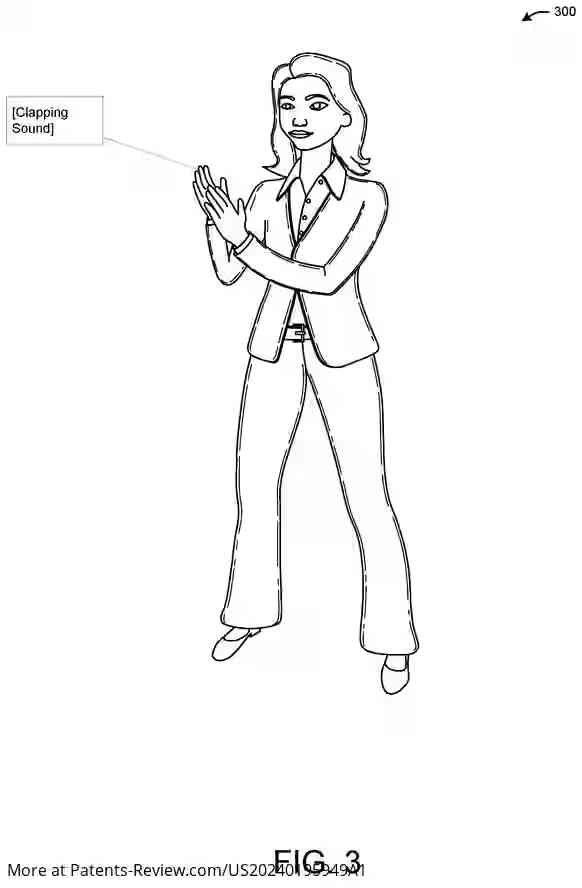

Synthesized Content

Synthesized signals can include talking heads, claps, or snaps, which are recognizable and repeatable patterns. They can be generated on the fly and tailored to different cameras or streams. This approach improves detection accuracy by providing continuous signals rather than discrete ones. The synthesized content can be presented to the cameras on a device such as a smartphone or tablet, allowing precise synchronization analysis.
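As a rough illustration of a clap-style signal, the sketch below builds an audio track and a per-frame brightness track that share the same event times. It is not the method of the application: the function name `synthesize_clap_track`, the 1 kHz decaying tone burst, the single bright frame, and all default parameters are assumptions chosen for the example.

```python
import math


def synthesize_clap_track(duration_s=4.0, sample_rate=8000, fps=25,
                          event_times_s=(1.0, 2.0, 3.0), burst_s=0.02):
    """Return (audio_samples, frame_brightness) built from the same event times.

    Hypothetical helper: the audio is silence plus short tone bursts, and the
    video is reduced to one brightness value per frame.
    """
    n_samples = int(duration_s * sample_rate)
    n_frames = int(duration_s * fps)
    audio = [0.0] * n_samples
    frames = [0.0] * n_frames
    burst_len = int(burst_s * sample_rate)

    for t in event_times_s:
        start = int(round(t * sample_rate))
        for i in range(burst_len):
            if start + i < n_samples:
                # Decaying 1 kHz tone burst standing in for a clap or snap.
                decay = math.exp(-5.0 * i / burst_len)
                audio[start + i] = decay * math.sin(
                    2 * math.pi * 1000 * i / sample_rate)
        frame = int(round(t * fps))
        if frame < n_frames:
            # A single bright frame marks the matching visual event.
            frames[frame] = 1.0
    return audio, frames


audio, frames = synthesize_clap_track()
```

Because both tracks are generated from one list of event times, any offset later measured between them at the camera output is attributable to the capture and processing chain rather than to the test signal.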

Applications and Benefits

Synthesized content allows more accurate audiovisual synchronization checks without requiring a person in front of the cameras. It supports a wide range of voice sequences and languages, which makes it usable in many contexts. The synthesized signals can be introduced as pre-event objects, so they do not appear in the actual broadcast while still enabling synchronization checks across multiple camera feeds.
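A check across multiple camera feeds might look like the following sketch, which takes an already measured audio-to-video offset per feed and proposes which stream to delay. The function `plan_corrections`, the camera identifiers, the sign convention (positive means the audio is late), and the 40 ms tolerance are all assumptions for illustration and do not come from the application.

```python
def plan_corrections(offsets_ms, tolerance_ms=40.0):
    """Map camera id -> (stream_to_delay, delay_ms) for feeds outside tolerance.

    offsets_ms: measured audio-minus-video offsets in milliseconds.
    tolerance_ms: hypothetical threshold below which no correction is made.
    """
    plan = {}
    for camera, offset in offsets_ms.items():
        if abs(offset) <= tolerance_ms:
            continue  # within tolerance, leave this feed untouched
        # Positive offset: audio arrives late, so hold the video back.
        # Negative offset: audio arrives early, so delay the audio.
        stream = "video" if offset > 0 else "audio"
        plan[camera] = (stream, abs(offset))
    return plan


measured = {"cam1": 10.0, "cam2": 120.0, "cam3": -80.0}
corrections = plan_corrections(measured)
```

Delaying the earlier stream is used here because a late stream cannot be advanced in a live pipeline; a real system would also need to budget for the added latency.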

Detection Techniques

Machine learning models can be used to detect audiovisual synchronization issues by transforming video into discrete audiovisual objects and localizing sound sources. Phoneme recognition correlates lip movements with the expected sounds, which improves detection accuracy. Together, these techniques identify synchronization errors between the synthesized video and audio so that they can be corrected before delivery.
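The machine learning and phoneme-based detectors described above are not reproduced here. As a much simpler stand-in, the sketch below estimates the offset by cross-correlating two one-dimensional signals that are assumed to have been extracted already: an audio loudness envelope and a per-frame mouth-openness measure, both resampled to the same rate. The function name `estimate_av_offset` and its parameters are hypothetical.

```python
def estimate_av_offset(audio_envelope, mouth_openness, rate_hz, max_lag_s=0.5):
    """Return the lag of audio relative to video in seconds.

    Positive result: the audio is late. Negative result: the audio is early.
    Both inputs are plain lists sampled at rate_hz.
    """
    n = min(len(audio_envelope), len(mouth_openness))
    a = audio_envelope[:n]
    v = mouth_openness[:n]

    # Remove the mean so constant background levels do not dominate the score.
    mean_a = sum(a) / n
    mean_v = sum(v) / n
    a = [x - mean_a for x in a]
    v = [x - mean_v for x in v]

    max_lag = int(max_lag_s * rate_hz)
    best_lag, best_score = 0, float("-inf")
    for lag in range(-max_lag, max_lag + 1):
        # Pair the video sample at index i with the audio sample at i + lag.
        score = 0.0
        for i in range(n):
            j = i + lag
            if 0 <= j < n:
                score += v[i] * a[j]
        if score > best_score:
            best_score, best_lag = score, lag
    return best_lag / rate_hz


# Synthetic check: three visual events, with the audio delayed by 7 samples.
video = [0.0] * 120
for idx in (20, 50, 80):
    video[idx] = 1.0
late_audio = [0.0] * 7 + video[:-7]
offset_s = estimate_av_offset(late_audio, video, rate_hz=100)
```

The brute-force loop keeps the example dependency-free; for long recordings an FFT-based correlation would be the usual choice.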