UTILIZING A DIFFUSION NEURAL NETWORK FOR MASK AWARE IMAGE AND TYPOGRAPHY EDITING

US20240355018

2024-10-24

Physics

G06T11/60

Inventors:

Applicant:

Drawings (4 of 13)

Smart overview of the Invention

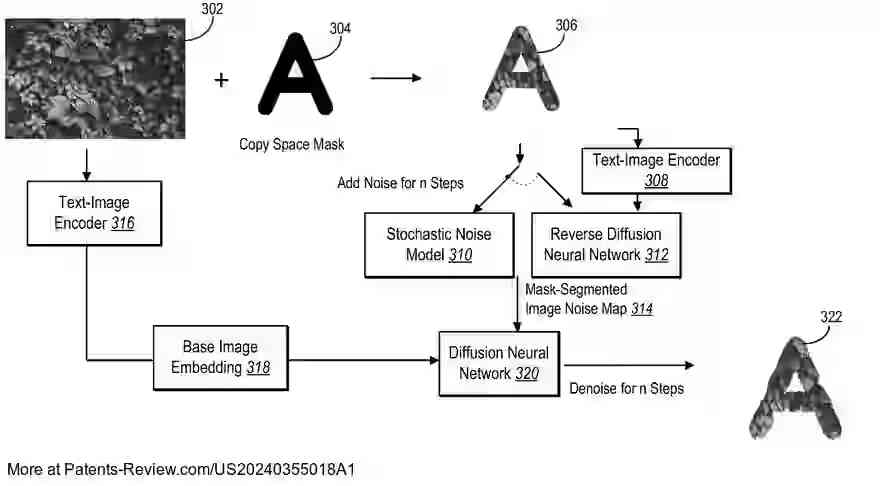

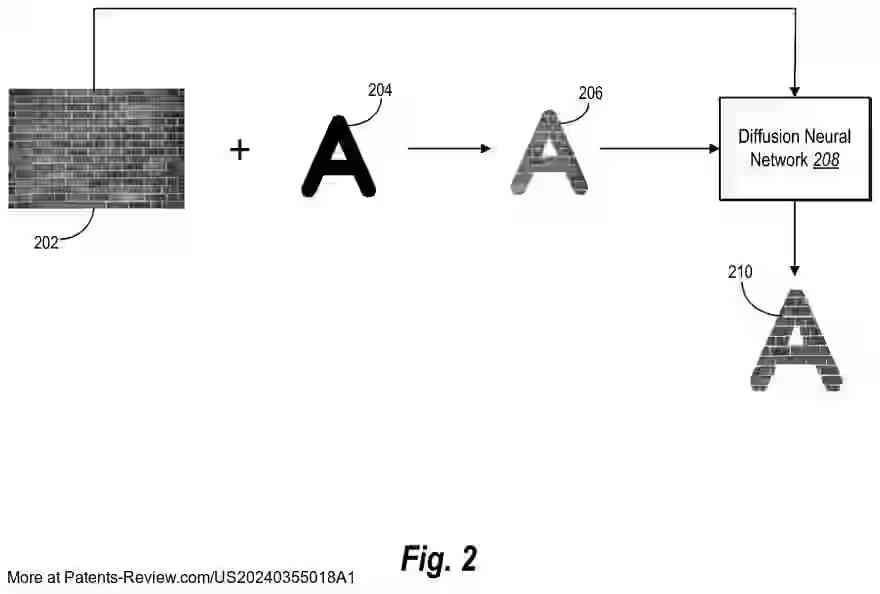

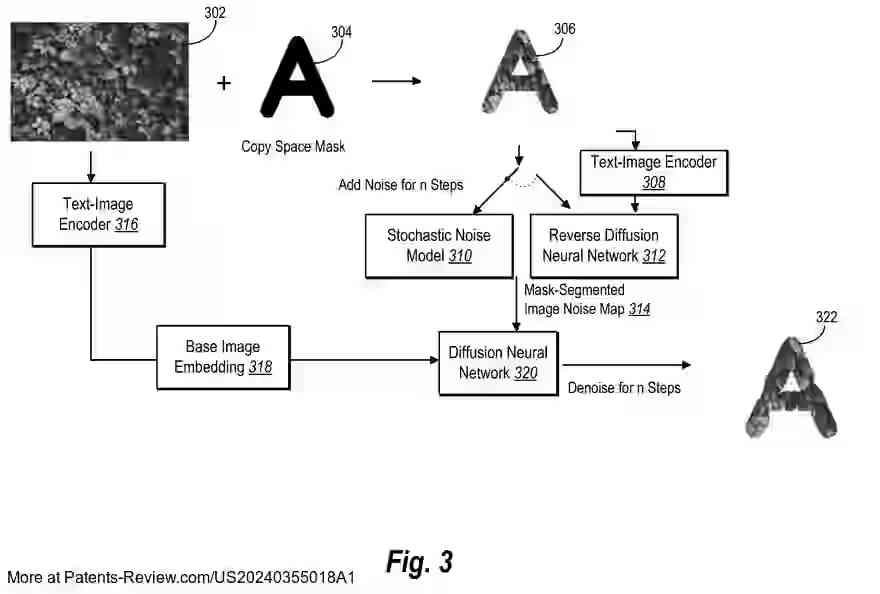

The patent application describes a system that uses a diffusion neural network for mask-aware image and typography editing. A text-image encoder generates a base image embedding from a base digital image. The system then combines the base digital image with a shape mask to form a mask-segmented image. Noising steps of a diffusion noising model turn the mask-segmented image into a noise map, which the diffusion neural network uses together with the base image embedding to generate stylized images that align with the shape mask.
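As a rough illustration of the mask-segmented image, the sketch below keeps base-image pixels inside a shape mask and fills everything else with a flat background. The compositing rule, the function name `mask_segmented_image`, and the `background` parameter are assumptions made for this example; the summary does not say how the application actually combines the two inputs.

```python
import numpy as np

def mask_segmented_image(base_image, shape_mask, background=0.0):
    """Keep base-image pixels inside the shape mask; fill the rest with `background`.

    base_image: float array of shape (H, W, C) with values in [0, 1]
    shape_mask: array of shape (H, W), nonzero inside the shape
    NOTE: this compositing rule is an assumption, not the patented method.
    """
    if base_image.shape[:2] != shape_mask.shape:
        raise ValueError("mask and image must share height and width")
    inside = (shape_mask > 0)[..., None]  # (H, W, 1), broadcasts over channels
    return np.where(inside, base_image, background)

# Tiny example: a uniform 4x4 RGB image and a 2x2 square mask in the centre.
base = np.full((4, 4, 3), 0.5)
mask = np.zeros((4, 4))
mask[1:3, 1:3] = 1
segmented = mask_segmented_image(base, mask)
```

In practice the shape mask could come from a glyph outline (for typography) or a segmentation model; here it is hard-coded to keep the example self-contained.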

Background

Advancements in digital image editing have led to the use of generative machine learning models, such as diffusion neural networks, for creating images from text inputs. However, conventional systems are often inflexible and inaccurate: they typically apply masks within a latent space during denoising, which leads to unrealistic results. The patent application addresses these limitations by proposing a system that improves flexibility and realism in image editing.

Technical Solution

The proposed system extracts a shape mask and adds noise to generate stylized images with a neural generation model. It creates a noise map from the mask-segmented image, then denoises that noise map using a diffusion neural network conditioned on the base image embedding. This process generates stylized images and typography that reflect the base image's style while following the contours of the shape mask. Additionally, by varying the structural weight from one stylized image to the next, the system can produce a sequence of images that forms an animation.
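The noising step can be sketched with the standard closed-form forward process of a denoising diffusion model, where a clean input x_0 is taken directly to step t as sqrt(alpha_bar_t) * x_0 + sqrt(1 - alpha_bar_t) * eps. This is a minimal sketch only: the linear beta schedule, the step counts, and the names `linear_alpha_bar` and `noise_map` are assumptions, and the summary does not specify which noising model the application uses.

```python
import numpy as np

def linear_alpha_bar(num_steps=1000, beta_start=1e-4, beta_end=0.02):
    """Cumulative signal fraction alpha_bar[t] for a linear beta schedule (assumed)."""
    betas = np.linspace(beta_start, beta_end, num_steps)
    return np.cumprod(1.0 - betas)

def noise_map(segmented, step, alpha_bar, rng):
    """Noise `segmented` directly to 1-indexed `step` using the closed-form forward process."""
    a = alpha_bar[step - 1]
    eps = rng.standard_normal(segmented.shape)
    return np.sqrt(a) * segmented + np.sqrt(1.0 - a) * eps

rng = np.random.default_rng(0)
alpha_bar = linear_alpha_bar()

# Stand-in for a mask-segmented image: a white square on a black background.
segmented = np.zeros((8, 8, 3))
segmented[2:6, 2:6] = 1.0

lightly_noised = noise_map(segmented, 50, alpha_bar, rng)   # shape still visible
heavily_noised = noise_map(segmented, 900, alpha_bar, rng)  # close to pure noise
```

Stopping at an early step leaves most of the mask shape in the noise map, while a late step leaves almost none. The denoising pass itself needs a trained diffusion network and is not shown.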

Functional Improvements

- The system enhances functionality by allowing flexible modification of structural weights during image generation.

- It supports different diffusion noising models for varied stylized image outcomes.

- Text prompts can be utilized to generate base digital images for further stylization.

- Realism is improved as the system generates images that naturally incorporate base image characteristics beyond strict shape mask boundaries.
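One way to picture the structural weight is as a dial on how many noising steps are applied before denoising. The sketch below assumes a convention in which a weight of 1.0 preserves the most mask structure (fewest noising steps) and 0.0 the least; the mapping, its direction, and the names `steps_for_structural_weight` and `animation_steps` are hypothetical and not taken from the application.

```python
import numpy as np

def steps_for_structural_weight(weight, max_steps=1000):
    """Map a structural weight in [0, 1] to a noising step count.

    Assumed convention: weight 1.0 keeps the most mask structure (fewest steps),
    weight 0.0 keeps the least (all steps).
    """
    if not 0.0 <= weight <= 1.0:
        raise ValueError("structural weight must lie in [0, 1]")
    return max(1, int(round((1.0 - weight) * max_steps)))

def animation_steps(num_frames, start_weight=0.9, end_weight=0.3, max_steps=1000):
    """One noising step count per frame, sweeping the structural weight linearly."""
    weights = np.linspace(start_weight, end_weight, num_frames)
    return [steps_for_structural_weight(float(w), max_steps) for w in weights]

# Four frames that move from strict adherence to the mask toward looser stylization.
frames = animation_steps(4)
```

Each step count would then drive one noising-and-denoising pass, and the resulting stylized images could be played back in order as an animation.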

Efficiency and Quality

The system improves efficiency by eliminating the need for a diffusion prior neural network, instead using a trained text-image encoder to generate base image embeddings directly. This yields realistic, high-quality outputs for both image-based and text-based style prompts. Users can select their preferred noising technique and structural weight, which gives them personalized styling options.