SYSTEM AND METHOD FOR RECONSTRUCTION OF A THREE-DIMENSIONAL HUMAN HEAD MODEL FROM A SINGLE IMAGE

US20250014274

2025-01-09

Physics

G06T17/00

Overview of the Invention

The invention describes a system and method for creating a three-dimensional (3D) model of a human head from a single two-dimensional (2D) image. A series of neural networks extracts features from the image, predicts parameters for the face model, camera, hair, and wrinkles, and generates a complete UV texture map. The result is a high-quality 3D face model suitable for applications such as gaming, movie production, and e-commerce.

Key Components

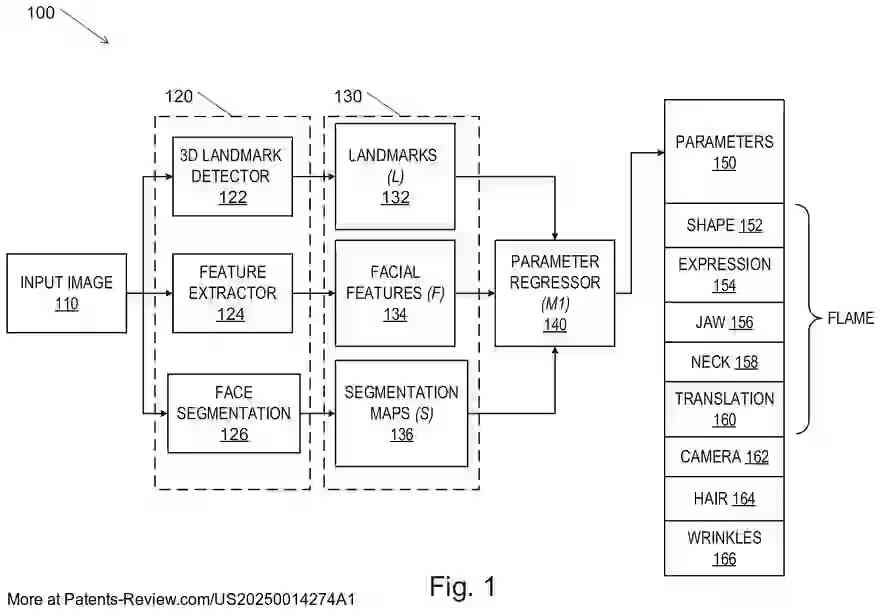

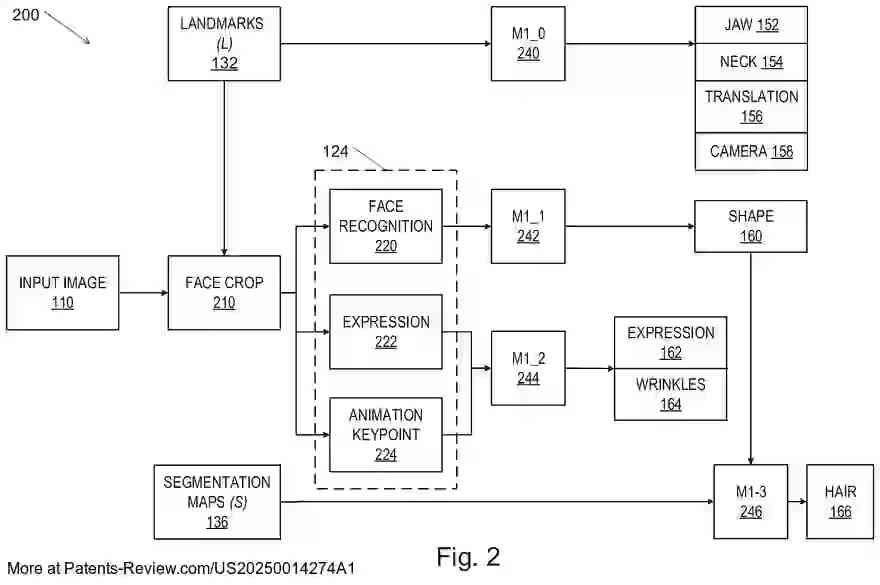

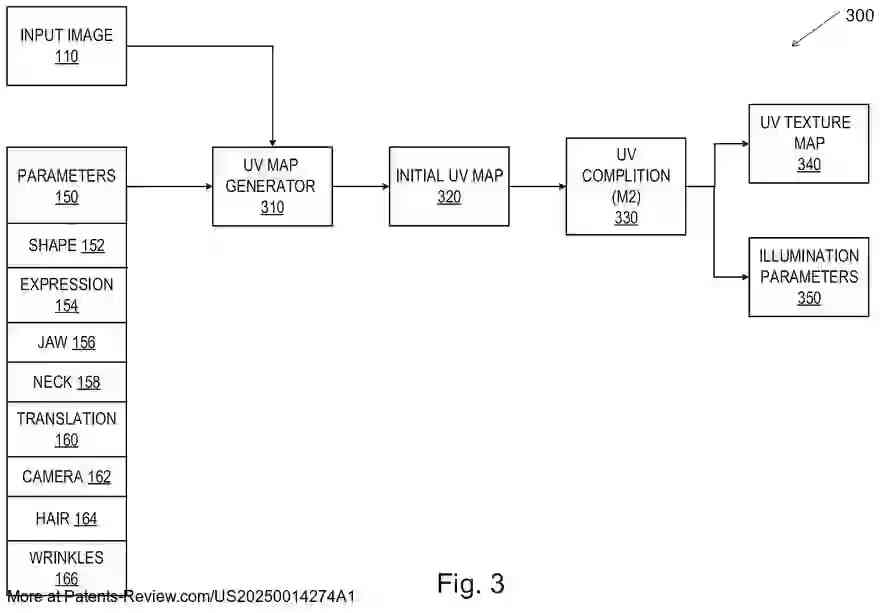

The process begins by passing the 2D image through preparatory networks that extract 3D landmarks, facial features, and segmentation maps. These outputs are fed into a parameter regressor network, which predicts parameters for the 3D face model, the camera, the hair, and facial wrinkles. An initial UV texture map is built from these parameters and the original image; a UV completion network then refines it into a full UV texture map.
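The data flow described above can be sketched as a chain of function calls. This is a minimal illustration, not the patent's implementation: every name (`reconstruct_head`, `PrepOutputs`, the `demo_*` stubs) is hypothetical, the networks are replaced by trivial placeholder callables, and the "completion" step simply fills unseen texels with the mean of the visible ones.

```python
from dataclasses import dataclass
from typing import List, Optional

Image = List[List[float]]            # hypothetical stand-in for a 2D image
UVMap = List[List[Optional[float]]]  # None marks texels not visible in the input


@dataclass
class PrepOutputs:
    """Outputs of the preparatory networks (field names are assumptions)."""
    landmarks: list
    features: list
    segmentation: list


def reconstruct_head(image, prep_nets, regressor, build_initial_uv, complete_uv):
    """Run the stages in the order the text describes them."""
    # 1. Preparatory networks: 3D landmarks, facial features, segmentation maps.
    prep = PrepOutputs(
        landmarks=prep_nets["landmarks"](image),
        features=prep_nets["features"](image),
        segmentation=prep_nets["segmentation"](image),
    )
    # 2. Parameter regressor: face model, camera, hair, and wrinkle parameters.
    params = regressor(prep)
    # 3. Initial (partial) UV texture map from the parameters and the input image.
    partial_uv = build_initial_uv(params, image)
    # 4. UV completion network fills in the texels the input image did not cover.
    full_uv = complete_uv(partial_uv)
    return params, full_uv


# --- Placeholder stages, for illustration only -------------------------------
demo_prep_nets = {
    "landmarks": lambda img: [(0.0, 0.0, 0.0)],
    "features": lambda img: [sum(map(sum, img))],
    "segmentation": lambda img: [[1 for _ in row] for row in img],
}


def demo_regressor(prep):
    return {"face": prep.features, "camera": [1.0], "hair": [], "wrinkles": []}


def demo_initial_uv(params, img):
    # Pretend only the first image row maps to visible texels.
    return [list(img[0])] + [[None] * len(row) for row in img[1:]]


def demo_complete_uv(partial_uv):
    # Fill unseen texels with the mean of the visible ones.
    known = [t for row in partial_uv for t in row if t is not None]
    fill = sum(known) / len(known)
    return [[fill if t is None else t for t in row] for row in partial_uv]


params, full_uv = reconstruct_head(
    [[0.2, 0.4], [0.6, 0.8]],
    demo_prep_nets, demo_regressor, demo_initial_uv, demo_complete_uv,
)
```

Passing each stage in as a callable keeps the sketch independent of any particular network architecture; in a real system each placeholder would be a trained model.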

Technical Specifications

The system combines several machine learning models, including convolutional neural networks (CNNs) such as ResNets. These models are trained on synthetic images generated from predefined parameters, which improves accuracy. The parameter regressor network consists of several subnetworks, each handling a different group of face model parameters, such as jaw, neck, shape, and expression.
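One way to picture a regressor built from several subnetworks is a set of independent output heads that all read the same feature vector. The sketch below is an assumption-laden illustration: the source does not say how the subnetworks are structured or whether they share input features, the class names are hypothetical, each head is reduced to a single randomly initialized linear layer, and the output sizes in `HEAD_DIMS` are invented for the example.

```python
import random


class LinearHead:
    """One hypothetical subnetwork, reduced to a single linear layer."""

    def __init__(self, in_dim, out_dim, rng):
        self.weights = [
            [rng.uniform(-0.1, 0.1) for _ in range(in_dim)] for _ in range(out_dim)
        ]
        self.bias = [0.0] * out_dim

    def __call__(self, features):
        # y = W x + b, computed row by row.
        return [
            sum(w * x for w, x in zip(row, features)) + b
            for row, b in zip(self.weights, self.bias)
        ]


# Parameter groups named in the text; the sizes are assumptions for illustration.
HEAD_DIMS = {"jaw": 3, "neck": 3, "shape": 100, "expression": 50}


class ParameterRegressor:
    """Maps one feature vector to a dictionary of parameter groups."""

    def __init__(self, feature_dim, head_dims=HEAD_DIMS, seed=0):
        rng = random.Random(seed)
        self.heads = {
            name: LinearHead(feature_dim, dim, rng) for name, dim in head_dims.items()
        }

    def __call__(self, features):
        return {name: head(features) for name, head in self.heads.items()}


regressor = ParameterRegressor(feature_dim=8)
predicted = regressor([0.5] * 8)
```

Keeping one head per parameter group means each group can have its own output size and, during training, its own loss term.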

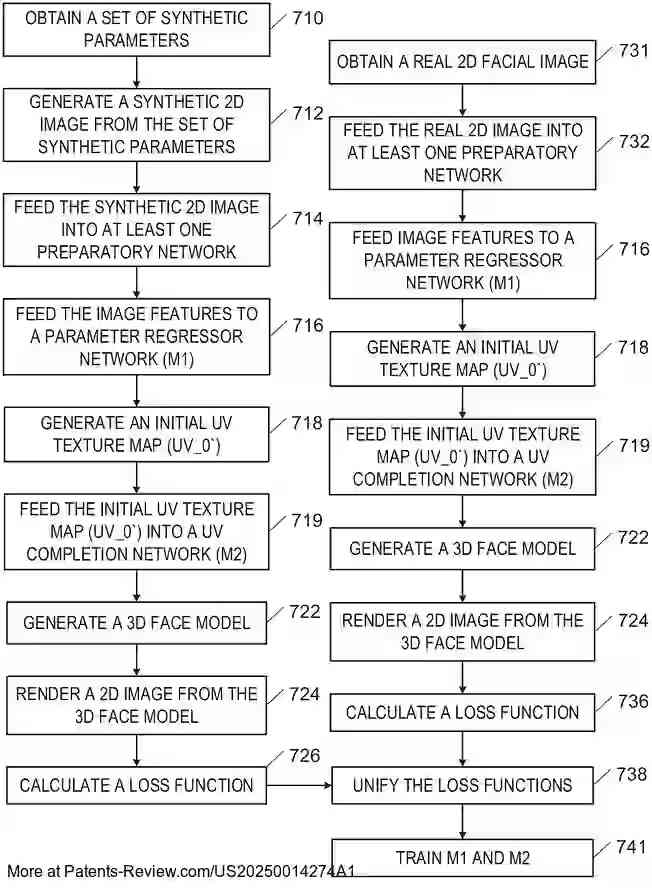

Training and Optimization

Training compares images rendered from the 3D model with the original input image using several loss functions, including regression loss, perceptual loss, and reconstruction loss. The networks are optimized iteratively to minimize these losses so that the final 3D model closely resembles the input image in both shape and texture.
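A common way to combine several losses is a weighted sum, sketched below. The specific definitions are assumptions, since the summary does not give formulas: regression loss is taken as mean squared error between predicted and reference parameters (available when training images are synthesized from known parameters), perceptual loss as mean squared error between feature vectors of the rendered and input images, and reconstruction loss as mean absolute pixel difference. The function names and the default weights are hypothetical.

```python
def mse(pred, target):
    """Mean squared error between two equal-length sequences."""
    return sum((p - t) ** 2 for p, t in zip(pred, target)) / len(pred)


def l1(pred, target):
    """Mean absolute error between two equal-length sequences."""
    return sum(abs(p - t) for p, t in zip(pred, target)) / len(pred)


def total_loss(pred_params, ref_params,
               rendered_pixels, input_pixels,
               rendered_feats, input_feats,
               weights=(1.0, 1.0, 1.0)):
    """Weighted sum of the three loss terms named in the text."""
    w_reg, w_perc, w_rec = weights
    regression = mse(pred_params, ref_params)            # parameter space
    perceptual = mse(rendered_feats, input_feats)        # feature space
    reconstruction = l1(rendered_pixels, input_pixels)   # pixel space
    return w_reg * regression + w_perc * perceptual + w_rec * reconstruction


example = total_loss(
    pred_params=[1.0, 2.0], ref_params=[1.0, 4.0],
    rendered_pixels=[0.5, 0.5], input_pixels=[0.0, 1.0],
    rendered_feats=[1.0], input_feats=[3.0],
)
```

An optimizer would evaluate this scalar after each rendering pass and update the network weights to reduce it; the relative weights control how strongly shape agreement (parameters) is traded off against appearance agreement (features and pixels).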

Applications and Benefits

This technology enables detailed 3D reconstructions from minimal data, namely a single 2D image. It applies to fields that require realistic human models, such as virtual reality and animation. By improving the quality of single-view reconstruction, it addresses limitations of earlier methods and yields more lifelike, more detailed models.