AUDIOVISUAL DEEPFAKE DETECTION

US20250037507

2025-01-30

Physics

G06V40/40

Drawings (4 of 9)

Smart overview of the Invention

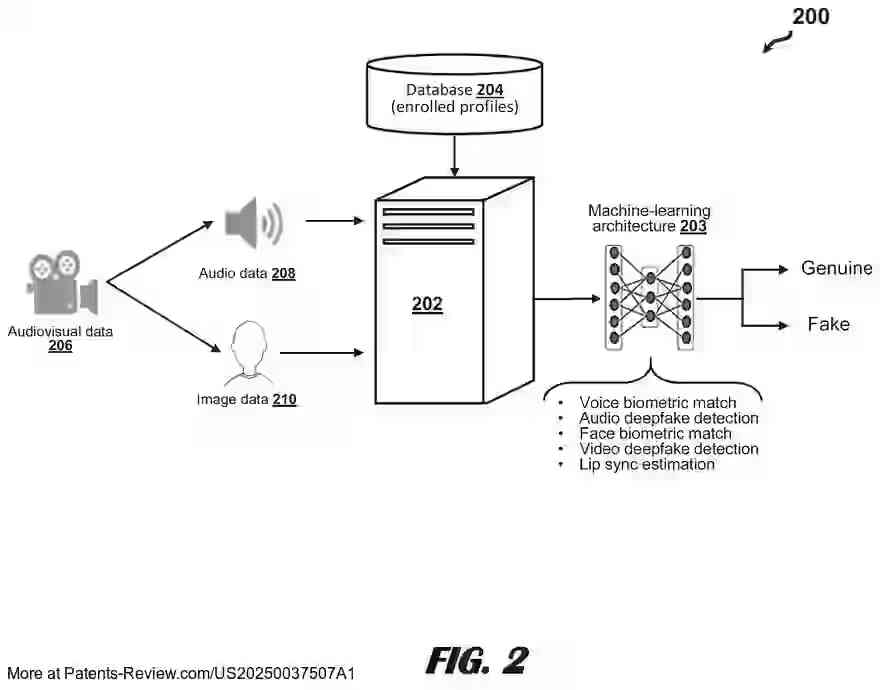

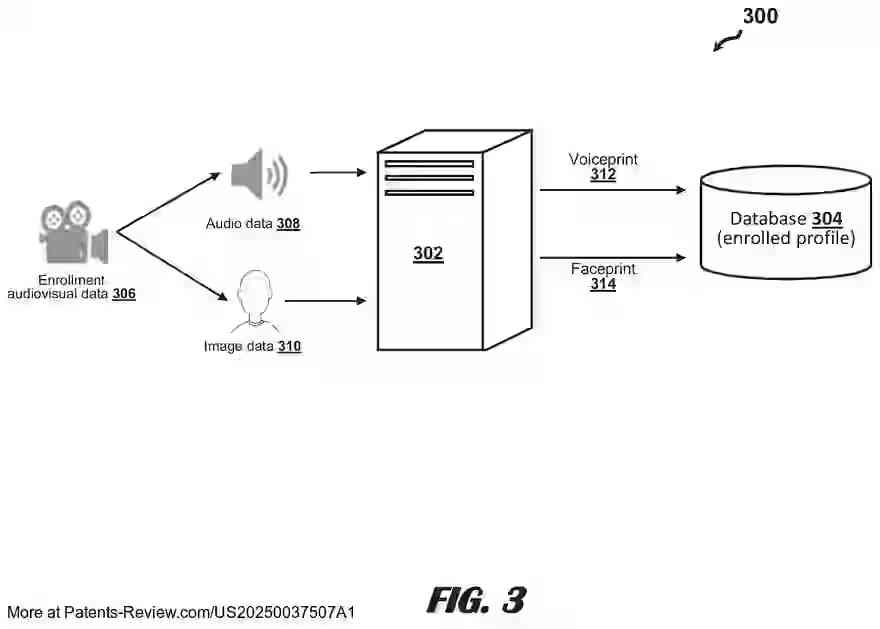

The patent application describes a machine-learning architecture for biometric identity recognition and deepfake detection. The system combines speaker and facial recognition with deepfake detection, analyzing both audio and visual data to assess the authenticity of audiovisual content. The layers of the architecture generate multiple scores, including scores for speaker recognition, facial recognition, deepfake detection, and lip-sync estimation.

Technical Field

The application addresses the growing challenge of detecting deepfakes in audiovisual data. Deepfakes are increasingly used to spread misinformation or impersonate individuals, and they are particularly problematic for systems that rely on audiovisual data for user authentication. The proposed solution evaluates the audio and visual components together, with the aim of improving the accuracy of deepfake detection.

System Architecture

The machine-learning architecture comprises several scoring components: sub-architectures for speaker deepfake detection, facial deepfake detection, speaker recognition, facial recognition, and lip-sync estimation. It extracts low-level features from the audiovisual data and uses them to generate scores that indicate the likelihood of deepfake content and whether the identity claimed in the video is verified. This integrated approach is intended to improve identity recognition and verification for both audio and visual data.
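As an illustration of how a recognition component might turn features into a score, the sketch below compares an enrolled embedding with an embedding extracted from the incoming sample. Cosine similarity, the `ComponentScores` container, and all field names are assumptions made for this example; the summary does not say how the sub-architectures compute their scores.

```python
import math
from dataclasses import dataclass
from typing import Sequence


def cosine_similarity(a: Sequence[float], b: Sequence[float]) -> float:
    """Cosine similarity between two equal-length embedding vectors."""
    if len(a) != len(b):
        raise ValueError("embeddings must have the same length")
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0.0 or norm_b == 0.0:
        # Assumption: an all-zero embedding is treated as "no match".
        return 0.0
    return dot / (norm_a * norm_b)


@dataclass(frozen=True)
class ComponentScores:
    """One score per scoring component named in the text (hypothetical field names)."""
    speaker_similarity: float  # speaker recognition
    face_similarity: float     # facial recognition
    speaker_deepfake: float    # speaker deepfake detection
    face_deepfake: float       # facial deepfake detection
    lip_sync: float            # lip-sync estimation
```

Here a speaker-recognition score would be obtained by calling `cosine_similarity(enrolled_voiceprint, inbound_voiceprint)`, where both arguments are hypothetical embedding vectors produced by an upstream feature extractor.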

Implementation

The application describes a computer-implemented method in which a processor obtains an audiovisual data sample, applies the machine-learning architecture to generate similarity and deepfake scores, and produces a final output score indicating the likelihood that the content is genuine. The method is intended to process complex audiovisual data efficiently in order to detect potential deepfakes.
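The final step of the method, combining component scores into one output score, could look roughly like the sketch below. The weighted average, the weight values, the score names, and the assumption that every score lies in [0, 1] are illustrative choices only; the summary does not describe the actual fusion function.

```python
from typing import Mapping

# Hypothetical weights; a real system would learn or tune these.
DEFAULT_WEIGHTS = {
    "speaker_similarity": 0.25,
    "face_similarity": 0.25,
    "speaker_deepfake": 0.2,
    "face_deepfake": 0.2,
    "lip_sync": 0.1,
}

# Assumption: deepfake scores are high when content looks fake,
# so they are inverted before contributing to a "genuine" score.
INVERTED = {"speaker_deepfake", "face_deepfake"}


def fuse_scores(
    scores: Mapping[str, float],
    weights: Mapping[str, float] = DEFAULT_WEIGHTS,
) -> float:
    """Weighted average of component scores; higher means more likely genuine."""
    total_weight = sum(weights.values())
    if total_weight <= 0:
        raise ValueError("weights must sum to a positive value")
    fused = 0.0
    for name, weight in weights.items():
        value = scores[name]
        if not 0.0 <= value <= 1.0:
            raise ValueError(f"score {name!r} must lie in [0, 1]")
        if name in INVERTED:
            value = 1.0 - value
        fused += weight * value
    return fused / total_weight
```

Under these assumptions, a sample with high similarity and lip-sync scores and low deepfake scores yields a fused score near 1, while a single high deepfake score pulls the result down in proportion to its weight.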

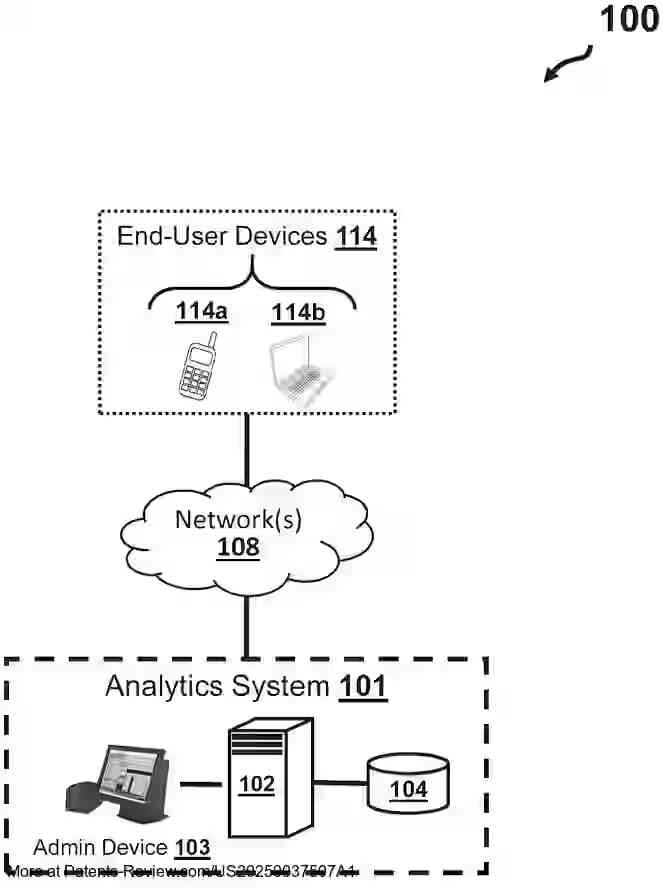

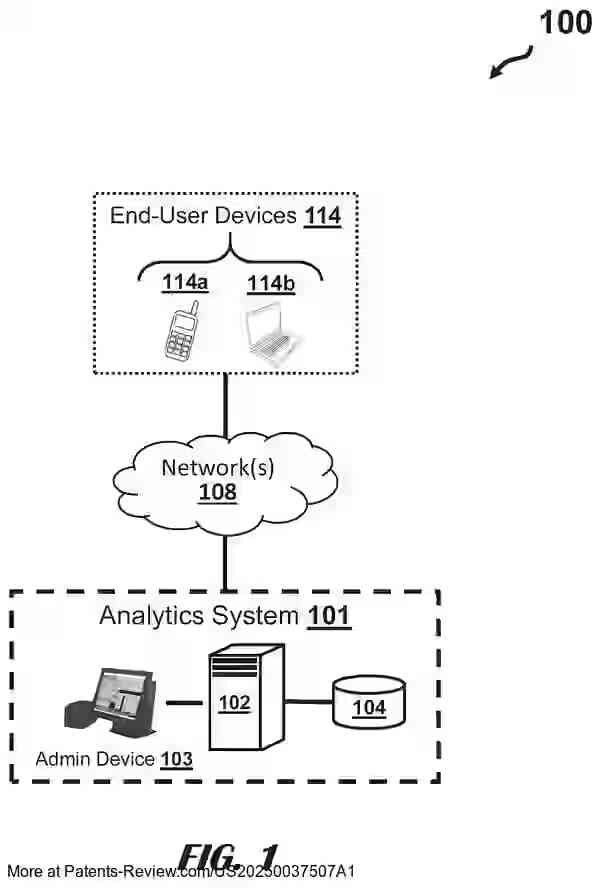

System Components

The system consists of an analytics system connected to end-user devices over one or more networks. Its analytics server processes audiovisual data to recognize voices and faces or to detect deepfakes. The server communicates over networks such as a LAN, a WAN, or the Internet using protocols such as TCP/IP, and it outputs scores indicating whether the audiovisual input is genuine or spoofed.
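One way an analytics server might report its result to an end-user device is sketched below. The JSON payload, the field names `final_score` and `label`, and the 0.5 threshold are all hypothetical; the summary states only that the server outputs scores indicating genuine or spoofed input.

```python
import json


def build_response(final_score: float, threshold: float = 0.5) -> str:
    """Serialize a final score and a genuine/spoofed label as JSON."""
    if not 0.0 <= final_score <= 1.0:
        raise ValueError("final_score must lie in [0, 1]")
    # Assumption: scores at or above the threshold are treated as genuine.
    label = "genuine" if final_score >= threshold else "spoofed"
    return json.dumps({"final_score": final_score, "label": label})
```

In practice the threshold would be chosen to balance false acceptances against false rejections for the deployment in question.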